Introduction: AI’s Role in Misinformation and Manipulation

Artificial intelligence is more than a technological innovation; it is reshaping how society produces and consumes information. As AI-generated content spreads across social media, the risk of manipulation grows. These tools are no longer confined to experts: they are now within reach of the average person, which amplifies the potential for harm. Malicious actors use machine learning models to craft convincing synthetic media, making it increasingly hard to tell reality from falsehood. This blurring of lines threatens public awareness and encroaches on cognitive liberty, the freedom to think and reach informed conclusions for oneself.

The implications of this digital manipulation are far-reaching. AI-generated images and text, produced and circulated in real time, can distort our perception of reality and erode trust in public discourse. Freedom of expression faces an insidious adversary: injecting AI into our information ecosystems creates fertile ground for misinformation, with harmful effects that ripple through society. It is therefore imperative to scrutinize the algorithms that shape our worldview, to advocate for transparency in AI development, and to hold meaningful conversations that bolster public awareness. The integrity of our democratic society hinges on these actions.

Table of Contents

- Introduction: AI’s Role in Misinformation and Manipulation

- AI-Generated Fake News: A Growing Threat to Public Trust

- Algorithms and Echo Chambers: Reinforcing Misinformation

- Deepfakes and Misinformation: The Perfect Storm

- Manipulating Public Opinion: AI in Political Campaigns

- Ethical Concerns: Who Controls the Narrative?

- The Danger to Democracy: A Crisis of Informed Consent

- Emotional Manipulation: AI’s Impact on Perception and Bias

- Privacy Concerns in Data Mining and Targeted Messaging

- Automated Decision-Making: A Breeding Ground for Bias

- Loss of Critical Thinking Is Misinformation’s Gain

- Corporate Interests: AI for Profit Over Truth

- Conclusion: Safeguarding Truth in the Age of AI Manipulation

AI-Generated Fake News: A Growing Threat to Public Trust

AI dramatically increases the scale and speed of fake news production and dissemination. Machine learning models generate text that convincingly mimics human writing. In elections, the implications are serious: malicious users exploit these models to skew public sentiment, and the same techniques can sway consumer choices. Public figures are frequent targets and can see their reputations ruined quickly. The damage to public trust is extensive. Social media companies could be part of the solution, but their deployment of countermeasures has been inconsistent. As a result, AI-fueled fake news remains a pressing concern that tests the limits of free speech and threatens human rights.

This issue undermines the integrity of democratic processes. It places an unprecedented strain on our ability to discern truth, thus jeopardizing informed public debate. With each passing election cycle, the risks escalate, and our democratic institutions are put to the test. We’re facing a new breed of challenges in upholding freedom of expression without enabling harmful distortions. Social media companies must act more consistently to defend the values they claim to uphold.

Algorithms and Echo Chambers: Reinforcing Misinformation

Social media algorithms are designed to keep users engaged, but they also trap them in echo chambers. These algorithmic bubbles amplify pre-existing beliefs, leading to increased political polarization. Machine learning enables these algorithms to predict and influence human behavior more effectively than ever, which raises the risk of manipulation, especially during critical times like national elections. Malign actors use AI-generated content to exploit these algorithmic vulnerabilities, further reinforcing misinformation. The result is a distorted public discourse that becomes a breeding ground for harmful content. Public awareness efforts have so far been insufficient to break these echo chambers, so misinformation continues to spread quickly.

Deepfakes and Misinformation: The Perfect Storm

Deepfakes, powered by generative AI and machine learning, radically escalate manipulation tactics. These AI-crafted faces and voices closely mimic real people, making them potent weapons for malicious actors. When deepfakes are deployed to spread misinformation, the risks are acute, particularly when they target public figures or circulate during crises. Detection mechanisms lag behind the rapid evolution of synthetic media. The psychological toll is significant, altering how we view reality itself. In a world where visual evidence holds sway, deepfakes shake our foundational beliefs in truth and trust. This technology exploits our cognitive vulnerabilities, raising ethical concerns that extend far beyond individual deceit.

Deepfakes erode the integrity of public discourse, fueling political polarization and public skepticism. Social media platforms, often the primary distribution points for deepfakes, have been sluggish in deploying effective countermeasures. Current regulatory frameworks are inadequate, leaving society exposed to a barrage of AI-enhanced disinformation. The average person, with limited access to detection tools, becomes an easy target. Deepfakes thus deepen the crisis of informed consent, which is vital to democratic governance. As Generative AI continues to evolve, the line between real and fake is increasingly blurred, posing unprecedented challenges to human rights and social cohesion.

Manipulating Public Opinion: AI in Political Campaigns

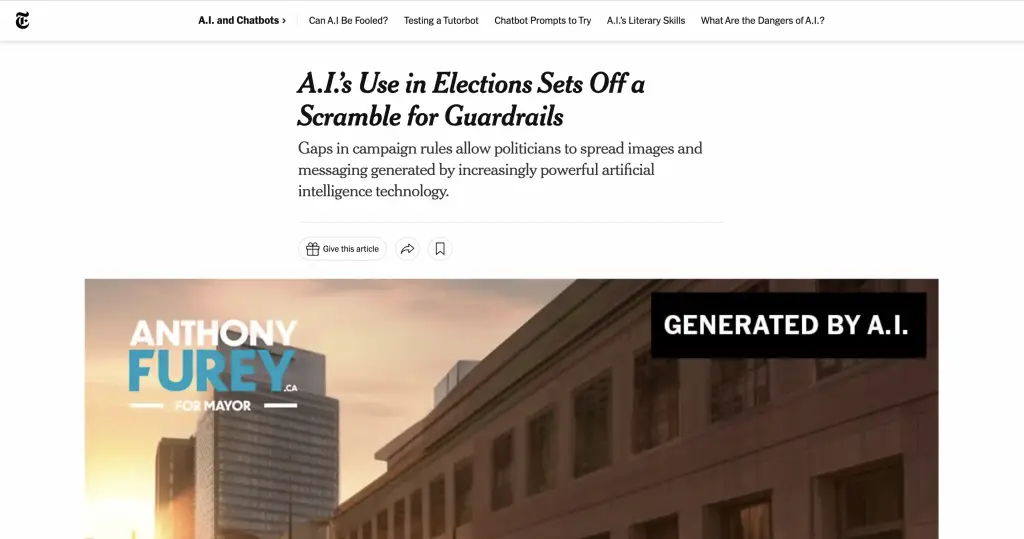

Political campaigns now heavily use AI tools to sway public opinion. AI-generated text and machine learning algorithms dissect voter sentiment with precision. Language models sift through big data to tailor messages for specific voter groups. In presidential elections, the stakes are higher, and AI-enhanced disinformation becomes a powerful weapon. Malicious actors fabricate fake accounts and social media posts to stir confusion and distrust.

These tactics chip away at cognitive liberty. The freedom to think and make informed decisions is compromised. This is not just about misleading people; it’s about hacking the decision-making process itself. Fake news and manipulated content don’t merely provide wrong answers; they corrupt the questions we think to ask. In a democratic society, informed consent is key. Voters need accurate information to make choices that align with their values and needs.

AI tools exploit the vulnerabilities in our cognitive processes. They manipulate emotions and perceptions, altering the landscape of public discourse. This changes the way we engage in discussions, debates, and, ultimately, how we vote. The risk of manipulation is not just theoretical; it’s happening in real time, affecting real people and real elections.

This poses a significant danger to democracy. The principle of informed consent is eroding, replaced by a distorted reality crafted by algorithms and bad actors. The need for regulations to manage these AI tools is urgent. Democracy demands an electorate capable of critical thought and informed decision-making. Without immediate action, the essence of democratic choice is at risk.

Ethical Concerns: Who Controls the Narrative?

The narrative around any social or political issue is increasingly controlled by algorithms. By relying on social media platforms and their recommendation systems, we have in effect outsourced our critical thinking to AI, a dangerous abdication of cognitive liberty. Ethical questions abound, particularly around freedom of expression and human rights. Who decides what content is harmful? What role should AI-generated synthetic media play in shaping public awareness? The risks of ceding control to autonomous systems are too great to ignore. Corporate interests often prioritize profits over truth, making it even more vital to scrutinize who controls these powerful AI tools.

The Danger to Democracy: A Crisis of Informed Consent

The peril artificial intelligence poses to democratic governance is hard to overstate. In the complex ecosystem of information dissemination, the role of AI has grown rapidly, often eclipsing human agency. The use of AI to manipulate public opinion, especially during elections, is particularly alarming. Language models and machine learning techniques have been employed to craft AI-generated content that’s almost indistinguishable from what a real person might say or write.

These sophisticated tools are often used by bad actors both inside and outside national borders. During presidential elections, a surge in AI-generated fake images and other synthetic media adds a new dimension to the challenge of maintaining public trust. The most malicious actors run AI-enhanced disinformation campaigns that can sway public opinion, creating a crisis of informed consent. The citizenry, deluged by online disinformation, struggles to distinguish fact from fiction.

One of the most striking aspects of this crisis is the role of social media platforms. Their algorithms have been implicated in amplifying false narratives and deepening political polarization. Digital platforms have become the perfect vehicles for delivering targeted misinformation, aided by advanced machine learning algorithms. These algorithms create echo chambers where like-minded individuals receive reinforcing, but often false, information. It is a breeding ground for bias and a hotbed for radicalization.

Given these circumstances, public awareness is crucial. Many digital platforms have started incorporating detection tools, but their effectiveness remains a subject of debate. The risk of manipulation through AI-generated text and images continues to undermine the very core of democratic values. Safeguarding freedom of expression while limiting potential harms has become one of the 21st century’s most daunting challenges. In addressing these issues, we can’t lose sight of the broader implications for human rights and the integrity of public discourse.

Emotional Manipulation: AI’s Impact on Perception and Bias

AI has a formidable capacity for emotional manipulation, affecting human behavior and perceptions of reality. Machine learning techniques in social media algorithms create echo chambers that intensify existing biases. The average person might not be aware of this manipulation, leading to psychological harm over time. Social media companies profit from this state of affairs, as more engagement means more data and higher ad revenues. These digital platforms wield machine learning capabilities that prioritize addictive content over public discourse, gradually eroding the distinction between genuine emotion and artificial stimulation.

Privacy Concerns in Data Mining and Targeted Messaging

AI-driven data mining invades our privacy in insidious ways. Bad actors operate social media accounts backed by advanced machine learning models to collect data on consumer behavior. This data forms the backbone of targeted messaging campaigns, especially during national elections. Malign actors exploit this information, posing serious risks to freedom of expression and human rights. Public figures become easy targets for deepfakes, AI-generated faces, and fake news stories that damage their reputations and influence public opinion. Such invasive tactics are among the most serious dangers of our digital age, in which the line between public and private continually blurs.

Automated Decision-Making: A Breeding Ground for Bias

Automated systems, underpinned by machine learning models, are increasingly used to make decisions affecting people’s lives. While efficient, these systems often harbor biases found in the data used to train them. Decisions related to healthcare, law enforcement, and even job recruitment are now made by algorithms, with human oversight gradually diminishing. Social media algorithms, for instance, have been critiqued for perpetuating systemic biases.

The potential harms become glaringly evident when one considers how these biases can shape human behavior and consumer choices. Even worse, algorithms can encode these biases into future decision-making models, perpetuating a vicious cycle. Diligence in auditing these machine learning algorithms for potential risks and biases is essential to ensuring ethical deployment.
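
One simple form such an audit can take is comparing how often an automated system approves people from different groups. The sketch below is illustrative only: the function names, the group labels, and the decision log are all hypothetical, and a real audit would use more than one fairness measure.

```python
from collections import defaultdict

def selection_rates(decisions):
    """Return the approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}

def disparate_impact_ratio(rates):
    """Lowest approval rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved, so no group was favored
    return min(rates.values()) / highest

# Hypothetical, made-up decision log: (group label, was the person approved?)
audit_log = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)

rates = selection_rates(audit_log)
ratio = disparate_impact_ratio(rates)
print(rates)
print(round(ratio, 2))
```

In this made-up log, group_b is approved half as often as group_a. A ratio well below 1.0 does not prove the system is unfair, but it is the kind of signal that should trigger a closer human review of the model and its training data.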

Loss of Critical Thinking Is Misinformation’s Gain

Artificial intelligence tools are revolutionizing the way we consume information. Algorithms curate personalized content, designed to keep us scrolling. While this customization may seem convenient, it comes at a steep price: the decline of critical thinking. Social media platforms leverage machine learning algorithms to feed us what they think we want to see. These platforms analyze our clicks, likes, and time spent on different posts.

Based on this data, the algorithm makes assumptions about our preferences. It shows us content that aligns with our existing beliefs and interests. This personalized approach may increase engagement, but it also reinforces our existing views. A closed information loop is created, where dissenting opinions are filtered out. This lack of diverse perspectives compromises our ability to think critically.
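
The closed loop described above can be illustrated with a toy ranking function. This is a minimal sketch under stated assumptions, not how any real platform works: the posts, the tags, and the scoring rule (count how often the user previously engaged with each tag) are all hypothetical.

```python
from collections import Counter

def build_profile(clicked_posts):
    """Count how often the user engaged with each topic tag."""
    profile = Counter()
    for post in clicked_posts:
        profile.update(post["tags"])
    return profile

def rank_feed(candidates, profile, k):
    """Score candidates by overlap with past engagement and keep the top k."""
    def score(post):
        # Tags the user never engaged with contribute 0 to the score.
        return sum(profile[tag] for tag in post["tags"])
    return sorted(candidates, key=score, reverse=True)[:k]

# Hypothetical click history: the user mostly engages with "party_x" posts.
history = [
    {"id": 1, "tags": ["party_x", "economy"]},
    {"id": 2, "tags": ["party_x", "immigration"]},
    {"id": 3, "tags": ["party_x"]},
]

# Hypothetical candidate posts covering a wider range of viewpoints.
candidates = [
    {"id": 10, "tags": ["party_x", "economy"]},
    {"id": 11, "tags": ["party_y", "economy"]},
    {"id": 12, "tags": ["party_x", "immigration"]},
    {"id": 13, "tags": ["party_y", "science"]},
    {"id": 14, "tags": ["science"]},
]

feed = rank_feed(candidates, build_profile(history), k=2)
feed_ids = [post["id"] for post in feed]
print(feed_ids)
```

Even though the candidate pool contains other viewpoints, both slots in the feed go to posts tagged "party_x". Every click on those posts then strengthens the same profile, which is how dissenting content ends up filtered out without anyone explicitly deciding to exclude it.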

Bad actors exploit this system. They use AI-generated synthetic media to disseminate fake news stories, manipulated images, and even deepfakes. These fraudulent creations are almost indistinguishable from authentic content. The average person, already lulled into a false sense of security by a feed full of affirming content, becomes an easy target. Malicious actors weaponize AI tools to alter perceptions of reality, pushing polarizing narratives.

This situation challenges our cognitive freedom. The freedom to think, to assess, and to arrive at conclusions is vital for a functioning democracy. Yet, here we are, caught in a web of misinformation. The landscape is so saturated with deceptive material that discerning the truth becomes an uphill battle. Our weakened critical thinking skills make us vulnerable, endangering public discourse and democratic processes. We must acknowledge the gravity of this issue. Stringent regulations, public education, and transparent algorithms are the first steps in reclaiming our cognitive liberty and ensuring a healthy public discourse.

Corporate Interests: AI for Profit Over Truth

Social media companies focus on engagement metrics at the expense of truth. Their algorithms are tuned to maximize ad revenue and time spent on the platform, and they often surface sensational but false content. The same data that drives engagement lets these companies target messages precisely. When profit becomes the ultimate goal, ethical concerns are bypassed, fueling misinformation and manipulation.

This focus on profit over ethics creates a fertile ground for misinformation. It goes beyond misleading individuals; it damages informed public dialogue. Social media platforms use machine learning to target questionable content at vulnerable audiences. This fosters distrust and division. These corporate practices sacrifice ethics for profit, eroding societal cohesion and integrity. The stakes are high, affecting not just individual users but society as a whole.

Conclusion: Safeguarding Truth in the Age of AI Manipulation

In this digital era, protecting the truth has become a Herculean task. The sophistication of AI-generated content, the erosion of critical thinking, and corporate motives that prioritize profit over ethics all contribute to an increasingly manipulated public. Democratic processes, human rights, and public awareness are all at stake. Defending against these 21st-century threats requires a multi-pronged approach: stricter regulations, public education, and technological innovation. While AI offers a wide range of applications and benefits, caution and ethical considerations must guide its development and deployment.
