Introduction

Can you learn everything you need to know about machine learning from a book of only about one hundred pages? That is the ambitious goal of The Hundred-Page Machine Learning Book by Andriy Burkov. Does this introduction to machine learning deliver? Here is our verdict.

Table of contents

- Introduction

- The Hundred-Page Machine Learning Book

- Who is the book for?

- Chapter-wise breakdown of The Hundred-Page Machine Learning Book

- 9 Reasons why you should read The Hundred-Page Machine Learning Book

- Basic Practice

- Machine Learning, Neural Networks, and Deep Learning

- About the Author

- 8 Reasons you should buy The Hundred-Page Machine Learning Book

- Conclusion

The Hundred-Page Machine Learning Book

Written by an experienced practitioner of machine learning, this book aims to introduce readers to core concepts of artificial intelligence (AI) and machine learning (ML) in an accessible manner. Industry experts believe that the book is a success. Amazon’s Head of Data Science, Karolis Urbonas, calls it a “great introduction to machine learning from a world-class practitioner.” eBay’s Head of Engineering, Sujeet Varakhedi, and LinkedIn’s VP of AI, Deepak Agarwal, agree.

As machine learning books go, this is a solid introduction to the field and its key concepts. It is also written in an easy-to-understand manner and is short enough to read in a single sitting.

Who is the book for?

In short, everyone. Machine learning algorithms and artificial intelligence are becoming part of almost everyone’s personal and professional lives. Understanding the major machine learning approaches will help everyone, not only engineers, embrace emerging technologies.

This book is also an excellent resource for anyone who wants to learn or understand machine learning. The Hundred-Page Machine Learning Book covers the scientific, statistical, and practical concepts of ML while remaining readable for those without programming experience. Let us go through the book chapter by chapter.

Chapter-wise breakdown of The Hundred-Page Machine Learning Book

Chapter 1: The Machine Learning Landscape

This chapter sets the stage by introducing the fundamental concepts and terminology of machine learning. It outlines the scope of the field and distinguishes between different types of learning algorithms. Clear definitions and an engaging tour of the machine learning landscape make it an excellent starting point for beginners.

The chapter opens with a compelling example that compares traditional programming to machine learning, using email spam detection as the scenario. It illustrates how machine learning shifts the approach from writing explicit rules to learning the rules from data, which conveys the transformative power of machine learning early on.
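To make the contrast concrete, here is a minimal sketch of our own (not taken from the book) that puts a hand-written rule next to a classifier that derives its word scores from labeled messages. The tiny data set, the scoring scheme, and all function names are assumptions made up for illustration.

```python
from collections import Counter

# Hypothetical toy data, invented for illustration only.
TRAINING = [
    ("win a free prize now", True),
    ("free money claim your prize", True),
    ("meeting agenda for monday", False),
    ("lunch on monday with the team", False),
]

def rule_based_is_spam(text):
    """Traditional programming: a human writes the rule explicitly."""
    return "free" in text.split()

def learn_word_scores(examples):
    """Machine learning: derive word scores from labeled examples."""
    spam_counts, ham_counts = Counter(), Counter()
    for text, is_spam in examples:
        (spam_counts if is_spam else ham_counts).update(text.split())
    vocabulary = set(spam_counts) | set(ham_counts)
    # A positive score means the word appeared more often in spam.
    return {word: spam_counts[word] - ham_counts[word] for word in vocabulary}

def learned_is_spam(text, scores):
    """Classify by summing learned scores; unseen words contribute 0."""
    return sum(scores.get(word, 0) for word in text.split()) > 0

scores = learn_word_scores(TRAINING)
```

The hand-written rule misses "claim your prize" because nobody told it about the word "prize", while the learned scores flag it because that word only ever appeared in spam examples.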

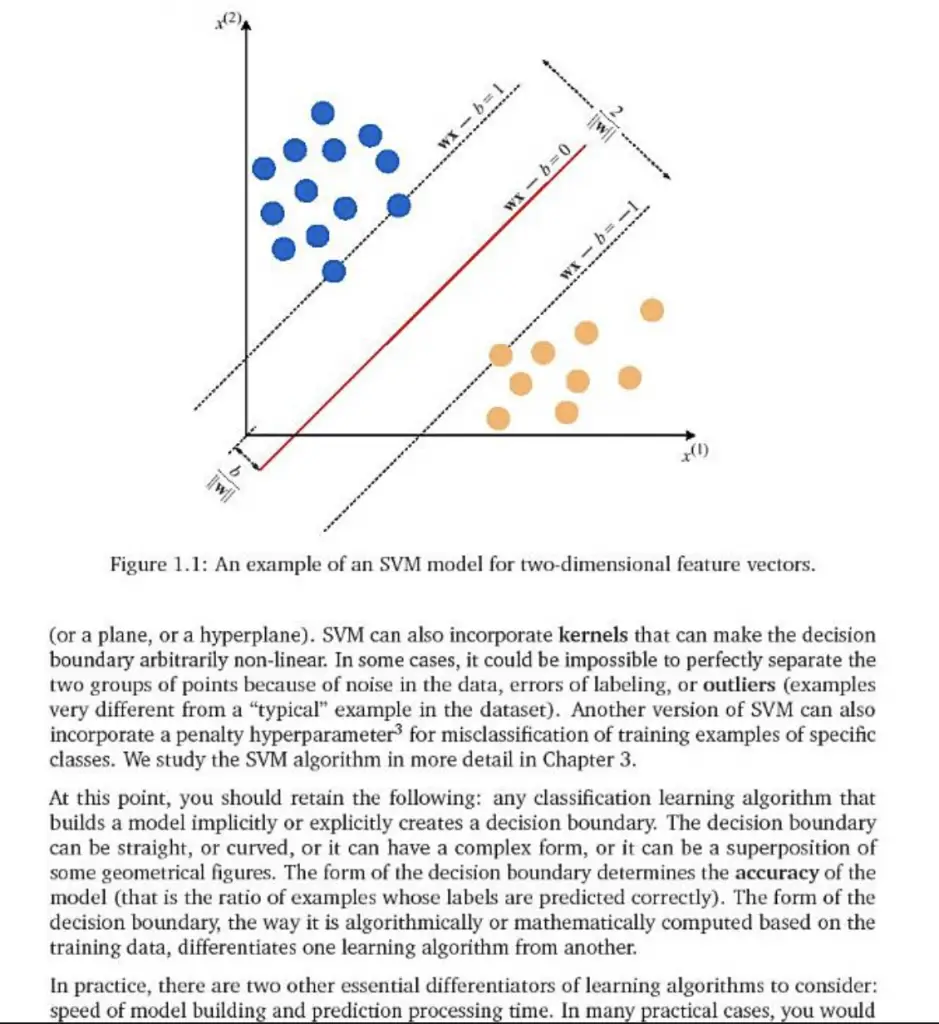

Chapter 2: Supervised Learning

Focusing on supervised learning, this chapter dives into algorithms that learn from labeled data. It’s commended for its succinct explanations and practical examples that illuminate the theory behind supervised learning techniques. The chapter effectively demystifies complex algorithms, making them accessible to readers with limited mathematical background.

The chapter introduces supervised learning through the example of predicting house prices based on features like size and location. It simplifies the concept of regression, making it relatable by showing how a model can be trained to make predictions based on input data. This practical example demonstrates the direct application of supervised learning in real estate valuation.

Chapter 3: Unsupervised Learning

Here, Burkov explores the intriguing world of unsupervised learning, where algorithms infer patterns from unlabeled data. The chapter is praised for its clear exposition of concepts such as clustering and dimensionality reduction. It skillfully demonstrates the applications and challenges of unsupervised learning, providing readers with a comprehensive understanding of its potential and limitations.

An example used in this chapter involves clustering customers based on their purchasing behavior without predefined labels. This scenario showcases how unsupervised learning can identify patterns and groupings in data, providing insights into customer segmentation. It’s a clear, real-world application that highlights the utility of unsupervised learning in marketing strategies.
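Customer segmentation of this kind is often done with k-means clustering. The sketch below is our own illustration, not the book's code: the customer figures are invented, and the choice to use the first k points as starting centroids is an assumption that keeps the example deterministic.

```python
import math

def k_means(points, k, iterations=10):
    """Plain k-means: alternate between assignment and centroid update."""
    # Assumption: the first k points serve as the initial centroids.
    centroids = [points[i] for i in range(k)]
    assignments = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: each point joins its nearest centroid.
        assignments = [
            min(range(k), key=lambda c: math.dist(p, centroids[c]))
            for p in points
        ]
        # Update step: each centroid moves to the mean of its members.
        for c in range(k):
            members = [p for p, a in zip(points, assignments) if a == c]
            if members:
                centroids[c] = tuple(sum(axis) / len(members) for axis in zip(*members))
    return centroids, assignments

# Hypothetical customers: (orders per month, average basket value in dollars).
customers = [(1, 20), (12, 150), (2, 25), (11, 160), (1, 30), (13, 155)]
centroids, segments = k_means(customers, k=2)
```

No labels are supplied, yet the occasional small-basket shoppers and the frequent big-basket shoppers end up in separate segments. In practice the features would usually be rescaled first so that no single one dominates the distance.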

Chapter 4: Other Forms of Learning

This chapter broadens the reader’s perspective by covering semi-supervised, reinforcement, and transfer learning. It is lauded for its ability to succinctly explain these less traditional forms of learning, showcasing the diversity and adaptability of machine learning methods. The examples provided illuminate the practical applications of these learning paradigms, enhancing the reader’s appreciation of the field’s depth.

The exploration of reinforcement learning is illustrated through the example of a robotic arm learning to pick up objects. By receiving feedback in the form of rewards or penalties, the robot incrementally improves its performance. This example vividly shows the trial-and-error learning process inherent in reinforcement learning and its potential for robotics applications.
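The reward-driven loop can be sketched in a few lines. The following is a deliberately simplified illustration of our own: instead of a real robotic arm it simulates three hypothetical grip strategies with made-up success probabilities, and it uses an epsilon-greedy rule, which is only one of many reinforcement learning techniques.

```python
import random

def train_gripper(success_rates, episodes=5000, epsilon=0.1, seed=0):
    """Epsilon-greedy trial and error over a fixed set of grip strategies."""
    rng = random.Random(seed)
    values = [0.0] * len(success_rates)   # estimated reward per strategy
    counts = [0] * len(success_rates)     # how often each strategy was tried
    for _ in range(episodes):
        if rng.random() < epsilon:
            action = rng.randrange(len(success_rates))                # explore
        else:
            action = max(range(len(values)), key=values.__getitem__)  # exploit
        # Simulated feedback: reward 1 for a successful pick, 0 for a failure.
        reward = 1.0 if rng.random() < success_rates[action] else 0.0
        counts[action] += 1
        # Incremental mean: nudge the estimate toward the observed reward.
        values[action] += (reward - values[action]) / counts[action]
    return values, counts

# Hypothetical success probabilities for three grip strategies.
values, counts = train_gripper([0.2, 0.5, 0.8])
best = max(range(len(values)), key=values.__getitem__)
```

The agent is never told which strategy is best. It finds out by trying, observing rewards, and gradually favoring whatever has worked so far.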

Chapter 5: Neural Networks and Deep Learning

Burkov demystifies the hype surrounding neural networks and deep learning, presenting them in an understandable and approachable manner. This chapter is highlighted for its clarity in explaining the architecture of neural networks and the principles of deep learning. It serves as a solid introduction to one of machine learning’s most dynamic areas, making complex concepts accessible without oversimplification.

To demystify neural networks, the chapter presents the example of handwriting recognition using deep learning. It explains how layers of a neural network work together to interpret handwritten digits, providing a step-by-step breakdown of the learning process. This example conveys the power of deep learning in processing complex, high-dimensional data like images.
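As a rough idea of how layers cooperate, the sketch below runs a forward pass through a tiny two-layer network. It is our own toy illustration: the 2x2 "image", the hand-picked weights, and the two output classes are all assumptions, whereas a real digit recognizer would learn far larger weight matrices from data.

```python
import math

def relu(vector):
    return [max(0.0, v) for v in vector]

def softmax(vector):
    peak = max(vector)  # subtract the maximum for numerical stability
    exps = [math.exp(v - peak) for v in vector]
    total = sum(exps)
    return [e / total for e in exps]

def dense(inputs, weights, biases):
    """One fully connected layer: weights holds one row per output unit."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def forward(pixels, params):
    hidden = relu(dense(pixels, params["w1"], params["b1"]))
    return softmax(dense(hidden, params["w2"], params["b2"]))

# Hypothetical hand-picked weights; a trained network would learn these.
params = {
    "w1": [[1.0, -1.0, 1.0, -1.0],    # responds to a bright left column
           [-1.0, 1.0, -1.0, 1.0]],   # responds to a bright right column
    "b1": [0.0, 0.0],
    "w2": [[2.0, -2.0], [-2.0, 2.0]],
    "b2": [0.0, 0.0],
}
left_stroke = [1.0, 0.0, 1.0, 0.0]    # 2x2 pixels in row-major order
probabilities = forward(left_stroke, params)
```

The first layer turns raw pixels into simple features, and the second layer turns those features into class probabilities. Deep networks stack many more such layers.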

Chapter 6: The Math of Machine Learning

Acknowledging the mathematical underpinnings of machine learning, this chapter delves into the essential mathematics required to understand machine learning algorithms. It receives praise for making potentially intimidating topics like linear algebra and probability theory approachable, offering a gentle introduction to the mathematical concepts that form the backbone of machine learning techniques.

A notable example in this chapter involves using linear regression to fit a line through data points representing house sizes and prices. The mathematical explanation of calculating the best-fit line offers a tangible understanding of how algorithms minimize error and make predictions. This example solidifies the reader’s understanding of the mathematical principles behind machine learning models.
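For readers who want to see the arithmetic, here is a short sketch of ordinary least squares for a single feature. It is our own illustration with invented house sizes and prices, not an excerpt from the book.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept

def mean_squared_error(xs, ys, slope, intercept):
    """Average squared gap between predicted and actual values."""
    return sum((slope * x + intercept - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

# Hypothetical data: size in square metres, price in thousands of dollars.
sizes = [50, 80, 100, 120]
prices = [150, 240, 300, 360]   # constructed so that price = 3 * size
slope, intercept = fit_line(sizes, prices)
error = mean_squared_error(sizes, prices, slope, intercept)
```

Because the invented prices lie exactly on a line, the fit recovers a slope of 3 and an intercept of 0 with zero error. Real data is noisy, and the same formulas then return the line with the smallest mean squared error.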

Chapter 7: Machine Learning in Practice

The book concludes with a practical guide to implementing machine learning models. This chapter is commended for providing a roadmap to deploying machine learning solutions, covering everything from data preprocessing to model evaluation. It offers valuable insights into the practical challenges and considerations in machine learning projects, equipping readers with the knowledge to apply their learning in real-world scenarios.

The chapter culminates with a detailed example of developing a machine learning model to predict customer churn. It covers data collection, preprocessing, feature engineering, model selection, training, and evaluation. This comprehensive example encapsulates the end-to-end process of applying machine learning, emphasizing the practical challenges and considerations in deploying models.

9 Reasons why you should read The Hundred-Page Machine Learning Book

Reading “The Hundred-Page Machine Learning Book” by Andriy Burkov offers several compelling benefits for anyone interested in machine learning, whether you’re a beginner or an experienced practitioner seeking a concise refresher. Here are key reasons why this book is a valuable read:

- Efficient Learning: The book distills complex machine learning concepts into digestible explanations. Its concise nature means you can quickly grasp the essentials of machine learning without getting bogged down by unnecessary detail.

- Practical Examples: Through real-world examples and case studies, the book demonstrates the practical applications of machine learning across various industries. These examples not only enhance understanding but also inspire ideas for applying machine learning in new ways.

- Authoritative Source: Andriy Burkov brings a wealth of experience from his career in machine learning and data science. His insights provide readers with practical knowledge that combines theoretical foundations with industry applications.

- Accessible to All Levels: Whether you’re new to the field or have some experience, the book is designed to be accessible. It starts with foundational concepts and builds up to more advanced topics, making it suitable for readers at different levels of expertise.

- Mathematical Foundations Explained: For those interested in the mathematical underpinnings of machine learning algorithms, the book provides a clear explanation of the essential mathematics in an approachable manner, ensuring you’re not left behind due to the math.

- Comprehensive Coverage: Despite its brevity, the book covers a wide range of topics within machine learning, from supervised and unsupervised learning to neural networks and deep learning, giving readers a broad overview of the field.

- Practical Guidance: The final chapters focus on implementing machine learning models, offering practical advice on everything from data preprocessing to model evaluation. This guidance is invaluable for anyone looking to apply machine learning in real-world projects.

- Time-efficient Investment: Given its concise format, the book represents a time-efficient investment in your education or professional development. You can gain a solid understanding of machine learning fundamentals without the need to commit to a longer, more detailed text.

- Encourages Further Exploration: The book serves as a springboard into the vast world of machine learning, encouraging further exploration. It lays the groundwork for deeper study and application of more advanced machine learning techniques and concepts.

Basic Practice

This part of the book allows you to apply what you have learned so far, starting with feature engineering.

It covers one-hot encoding, binning, and normalization, among other topics. Some of the most valuable pages here show you how to assess the effectiveness of your algorithm. Loss functions are one way to do that: they measure how far a model’s predictions fall from the true values in a data set. Comparing losses helps ML engineers choose the best algorithm for the task at hand.
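The three feature engineering techniques named above are easy to sketch. The helper names, categories, and bucket edges below are our own assumptions for illustration, and the normalization shown is min-max scaling, one common variant.

```python
def one_hot(value, categories):
    """Encode a categorical value as a vector with a single 1."""
    return [1 if value == category else 0 for category in categories]

def bin_index(value, edges):
    """Binning: index of the first bucket whose upper edge exceeds value."""
    for index, upper in enumerate(edges):
        if value < upper:
            return index
    return len(edges)

def min_max_normalize(values):
    """Normalization: rescale values linearly into the range [0, 1].

    Assumes the values are not all identical (otherwise high == low).
    """
    low, high = min(values), max(values)
    return [(v - low) / (high - low) for v in values]

colors = ["red", "yellow", "green"]
encoded = one_hot("yellow", colors)              # [0, 1, 0]
age_bucket = bin_index(37, edges=[18, 35, 65])   # 2, the bucket for ages 35 to 64
scaled = min_max_normalize([10, 20, 40])
```

Each helper turns raw values into numbers a learning algorithm can work with: categories become vectors, continuous ages become buckets, and features on different scales become comparable.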

Objective functions are closely related. An objective function scores a candidate solution against the training data, and training searches for the solution with the best score. When the objective is a loss to be minimized, a high value signals that the model underperforms and may need additional training.

In addition, the author covers distance functions, activation functions, cost functions, and statistical notation in this part of the book.

Machine Learning, Neural Networks, and Deep Learning

The Hundred-Page Machine Learning Book also provides an accessible introduction to neural networks and deep learning.

Burkov describes feed-forward neural networks and the activation functions used in them. He also writes about advances in deep learning. One of the topics covered is transfer learning, where ML engineers reuse a model pre-trained on one task as the starting point for a new task. Because the base model has already learned useful representations, training an algorithm for the new task becomes easier. Burkov also covers gradient descent, the optimization algorithm commonly used to train neural networks.
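Gradient descent is easiest to understand on the smallest possible network. The sketch below is our own illustration, not the book's code: it trains a single sigmoid neuron on the logical OR function, and the learning rate and step count are arbitrary assumptions.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(inputs, weights, bias):
    return sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)

def train_neuron(samples, learning_rate=0.5, steps=5000):
    """Batch gradient descent on the log loss of a single sigmoid neuron."""
    weights, bias = [0.0, 0.0], 0.0
    n = len(samples)
    for _ in range(steps):
        grad_w, grad_b = [0.0, 0.0], 0.0
        for inputs, target in samples:
            # For log loss with a sigmoid, the gradient with respect to the
            # pre-activation is simply prediction minus target.
            error = predict(inputs, weights, bias) - target
            grad_w = [g + error * x for g, x in zip(grad_w, inputs)]
            grad_b += error
        # Step against the average gradient.
        weights = [w - learning_rate * g / n for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / n
    return weights, bias

# Toy task: learn logical OR from its four input/output pairs.
or_samples = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
weights, bias = train_neuron(or_samples)
```

Each step moves the weights a little in the direction that reduces the loss. Larger networks apply the same idea, with backpropagation computing the gradients for every layer.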

About the Author

Andriy Burkov is a distinguished figure in the field of machine learning and artificial intelligence, with an impressive blend of academic achievement and industry experience. Holding a Ph.D. in Artificial Intelligence, Burkov has dedicated a significant portion of his career to advancing AI research and its applications. His work is characterized by a deep understanding of both the technical intricacies and the broader implications of machine learning technologies. His contributions extend beyond his book and include scholarly articles and participation in leading AI conferences.

Burkov’s commitment to demystifying AI and making machine learning accessible to a wider audience reflects his passion for the field and his belief in the transformative power of AI technologies. Through his writings and professional endeavors, Andriy Burkov continues to influence both aspiring learners and seasoned practitioners, cementing his status as a respected voice in the machine learning community.

8 Reasons you should buy The Hundred-Page Machine Learning Book

Purchasing “The Hundred-Page Machine Learning Book” by Andriy Burkov is a wise decision for several compelling reasons, especially for those eager to delve into the world of machine learning (ML). Here are the key factors that make this book a valuable acquisition:

- Conciseness and Clarity: Burkov has masterfully condensed complex ML concepts into a concise format without sacrificing depth or clarity. This makes the book an efficient resource for learning, saving time while ensuring a comprehensive understanding of key ML principles.

- Expertise of the Author: Andriy Burkov’s extensive experience in ML and AI, both in academia and the industry, ensures that the insights provided are not only theoretically sound but also practically relevant. His expertise offers readers a trustworthy guide through the often complex landscape of machine learning.

- Practical Application: The book is not just theoretical; it includes practical examples and case studies that illustrate how ML concepts are applied in real-world scenarios. This aspect is invaluable for learners who wish to see how theoretical knowledge translates into practical solutions.

- Accessible to a Broad Audience: Whether you are a beginner with no prior knowledge of ML or a professional seeking to refresh your understanding, the book caters to a wide range of readers. Its clear and approachable style makes complex topics accessible to everyone.

- Foundational and Up-to-Date: The content covers foundational aspects of machine learning while also touching upon contemporary topics and trends in the field. This ensures that readers gain a solid base as well as insights into the current and future directions of ML research and applications.

- Encourages Further Learning: For those inspired to explore beyond the basics, the book serves as a springboard into more advanced study. It lays down the groundwork and motivates readers to pursue further learning and specialization in areas of interest within machine learning.

- Efficient Learning Resource: In a field as vast as machine learning, having a resource that effectively distills essential concepts into a digestible format is invaluable. This book represents an efficient use of your time and effort, maximizing learning while minimizing confusion and overwhelm.

- High Return on Investment: Considering the potential career benefits and advancements in understanding that come from mastering ML concepts, purchasing this book represents a high return on investment. The knowledge gained can open doors to new opportunities in tech-driven industries and research fields.

Conclusion

“The Hundred-Page Machine Learning Book” by Andriy Burkov emerges as an indispensable resource for anyone keen on exploring or advancing in the field of machine learning. Its concise yet comprehensive approach demystifies complex concepts, making the vast and often intimidating world of ML accessible to a broad audience. The book’s strength lies in its ability to blend theoretical foundations with practical applications, guided by Burkov’s extensive expertise. It serves not only as an introductory guide for beginners but also as a valuable refresher for seasoned professionals.

By providing a clear pathway through the intricacies of machine learning, Burkov’s work encourages further exploration and learning, underscoring the transformative potential of ML technologies in various domains. Purchasing this book is an investment in knowledge that pays off in understanding and applying machine learning principles effectively. Whether you’re looking to kickstart a career in AI, enhance your current skill set, or simply gain a deeper understanding of one of today’s most dynamic technological fields, “The Hundred-Page Machine Learning Book” is a compelling choice. It delivers real insight and practical knowledge in an efficient and engaging manner.