Introduction

Elbot is an award-winning chatbot developed by Artificial Solutions and applauded for its conversational abilities and human-like interactions. What sets Elbot apart from other chatbots is its ability to pick up on context and give witty, humorous responses, often with the kind of irony and sarcasm a human would use.

This distinctive feature is made possible by an AI technology known as Natural Language Understanding (NLU), which allows Elbot to comprehend user inputs and respond in a way that feels genuinely conversational and engaging. Its design, coupled with its learning and interaction capabilities, makes Elbot one of the most impressive chatbots in the AI landscape.

What is Conversational AI?

As the name implies, Conversational AI can be understood as literally ‘talking’ with a machine. How does that differ from interacting via code? After all, isn’t writing complex logical functions the same as speaking with a machine? The difference lies in the artificial intelligence part: we address the machine in our own natural language, such as English, and the Conversational AI responds to us in kind.

A commonly accepted definition of Conversational AI is the application of machine learning to develop language-based apps that allow humans to interact naturally with devices, machines, and computers using speech or text.

We already use conversational AI in our daily lives. Maybe you have asked Alexa for an update on the news, or asked a chatbot for assistance while filling in an online order. You speak in your normal voice or type in English, and the device understands, finds the best answer, and replies with speech or text that sounds natural.

Conversational AI applications come in several forms. Simple FAQ bots, which search a database to respond to queries, count because they still need to interpret your query and match it to the best answer. More complex forms of conversational AI are virtual personal assistants like Amazon’s Alexa or Apple’s Siri, which can understand the nuances of voice commands and carry out complex requests.
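To make the FAQ-bot idea concrete, here is a minimal Python sketch of matching a query to the best stored answer. It is only an illustration: the FAQ entries, the `answer` function, the word-overlap (Jaccard) scoring, and the `threshold` value are all assumptions made for this example, not a description of how Elbot or any particular product works.

```python
import re

# Hypothetical FAQ entries, invented purely for illustration.
FAQ = {
    "How do I track my order?": "You can track your order from the Orders page.",
    "What is your return policy?": "Items can be returned within 30 days.",
    "How do I reset my password?": "Use the 'Forgot password' link on the sign-in page.",
}

def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def jaccard(a, b):
    """Share of words two sets have in common (0.0 when both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def answer(query, faq=FAQ, threshold=0.2):
    """Return the answer whose stored question overlaps most with the query."""
    query_tokens = tokenize(query)
    best_question = max(faq, key=lambda q: jaccard(query_tokens, tokenize(q)))
    # Fall back when even the best match shares too few words with the query.
    if jaccard(query_tokens, tokenize(best_question)) < threshold:
        return "Sorry, I don't know the answer to that."
    return faq[best_question]

print(answer("how can I reset my password"))
print(answer("tell me a joke"))
```

Word overlap is about the simplest matching rule there is. It has no notion of synonyms or intent, which is the gap that the more advanced assistants mentioned above try to close.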

Conversational AI is an essential building block of human interactions with intelligent machines and applications. None of the fascinating new technologies or gadgets are possible without getting computers to understand human languages, with all their hidden rules and awkward complexity. In the last few years, deep learning has improved the state of the art in conversational AI and offered superhuman accuracy on certain tasks. Deep learning has also reduced the need for deep knowledge of linguistics and rule-based techniques for building language services, which has led to widespread adoption and the birth of the so-called ‘Super’ chatbots. One such chatbot is Elbot, the supposed genius chatbot.

What is Elbot?

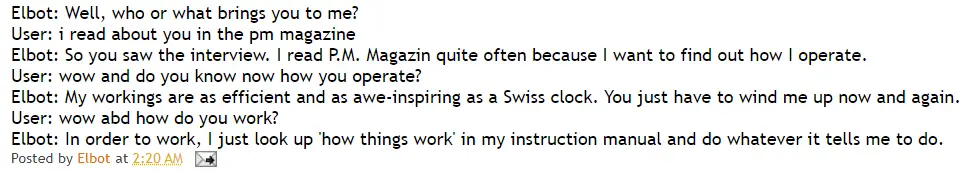

Elbot is a Conversational AI chatbot that was created early in 2001 by Fred Roberts as a modified version of the basic retrieval chatbot Teneo. Roberts’s idea was to program Elbot with some ‘wiggle’ so that it produces human-like responses to the input it gets from users. The chatbot is open source and anyone can converse with it at www.elbot.com. If you have conversed with Elbot, know that it has now learned from the exchange! Elbot constantly tries to improve itself by adding data from dictionaries, address books, instruction manuals, and much more to its database so that it can produce an acceptable response in any given situation.

If you have tried having a conversation with Elbot, you may have noticed it making the occasional sarcastic or snide comment. This trait is what separates Elbot from other chatbots and Conversational AI products. Because of it, the chatbot has won awards in the Chatterbox Challenge in the public popularity, most humorous, and most knowledgeable categories. It even won the Loebner Prize in 2008, one of the most prestigious international competitions for artificial intelligence. It convinced 3 of the 12 judges that its conversational skills were indistinguishable from a human’s, nearly passing the famous Turing Test for machine intelligence! Thankfully, Elbot is not yet sentient, or many comedians would be looking for a new job.

Currently, the bot is owned by the company Artificial Solutions and is being used as a tool to study the psychology of human-machine behavior.

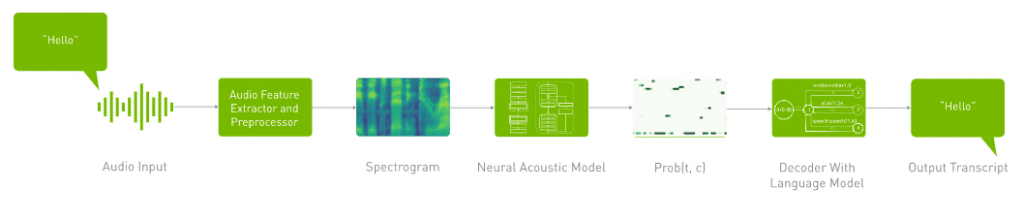

What makes Elbot so smart?

Elbot relies on breakthroughs in natural language understanding (NLU) algorithms that take text as input, work out context and intent, and return an intelligent response. Deep learning models are applied to NLU because they generalize accurately over a range of contexts and languages. Transformer deep learning models, such as BERT (Bidirectional Encoder Representations from Transformers), are an alternative to recurrent neural networks that apply an attention technique. For each word in a sentence, this technique focuses ‘attention’ on the most relevant words that come before or after it. The BERT model has revolutionized Elbot’s ability to offer query and response accuracy comparable to human testers for Q&A, intent recognition, and sentiment analysis, among other things.
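The attention technique can be illustrated with a small Python sketch of scaled dot-product self-attention, the core operation in Transformer models. This is a simplification under stated assumptions: real models such as BERT first pass each word through learned query, key, and value projections and use many attention heads, all of which are omitted here, and the two-dimensional word vectors are made-up values. It is not Elbot's actual code.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(u, v))

def self_attention(vectors):
    """Scaled dot-product self-attention over a list of word vectors.

    Simplification: each vector serves as its own query, key, and value.
    Returns (weights, outputs), one row per word.
    """
    dim = len(vectors[0])
    weights, outputs = [], []
    for query in vectors:
        # Score this word against every word in the sentence, before and after it.
        scores = [dot(query, key) / math.sqrt(dim) for key in vectors]
        row = softmax(scores)
        weights.append(row)
        # The output is a weighted average of all word vectors.
        outputs.append(
            [sum(w * v[i] for w, v in zip(row, vectors)) for i in range(dim)]
        )
    return weights, outputs

# Toy vectors standing in for three words (hypothetical values).
words = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
weights, outputs = self_attention(words)
for row in weights:
    print([round(w, 3) for w in row])
```

In this toy input the first and third words have identical vectors, so each places more weight on the other (and on itself) than on the second word. Each word's row is also computed independently of the others, so no word has to wait for the previous one to be processed.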

The technology behind conversational AI is complex. It involves a multi-step process that requires a massive amount of computing power, and its computations must all complete in less than 300 milliseconds to deliver a great user experience. Elbot responds to this challenge by leveraging a GPU. A GPU, or graphics processing unit, is a processor composed of hundreds of cores that can handle thousands of threads in parallel. Transformer-based deep learning models like BERT don’t require sequential data to be processed in order, which allows much more parallelization and reduces training and execution time on a GPU. The combination of novel NLU algorithms and advancements in processing power makes Elbot one of the leading chatbots in the industry.

Conclusion

The Elbot chatbot represents a significant step forward for conversational AI agents. Its smart and witty communication style, powered by advanced Natural Language Understanding technology, sets it apart from traditional chatbots and makes it more engaging and human-like. Its ability to understand context and respond with humor and sarcasm offers a unique conversational experience, demonstrating how AI technology can be integrated into our everyday lives in an enjoyable and productive way. As we continue to embrace AI advancements, chatbots like Elbot serve as a testament to the potential of AI to enhance human-machine interactions and pave the way for more sophisticated conversational agents in the future.