Introduction

Machine learning is a subfield of artificial intelligence, which is defined as the capability of a machine to simulate intelligent human behavior and to perform complex tasks in a manner that is similar to the way humans solve problems.

To understand machine learning, you need to know the algorithms that drive its opportunities and its limitations.

In general, machine learning algorithms are used in a wide range of applications, such as fraud detection, computer vision, autonomous vehicles, and predictive analytics, where it is not computationally feasible to develop conventional algorithms that meet the real-time and predictive requirements of the work.

There are three basic functions of machine learning algorithms –

Descriptive – Explaining with the help of data

Predictive – Predicting with the help of data

Prescriptive – Suggesting with the help of data

The list above tells us what machine learning does, but the art of making these functions work lies in how the algorithms are designed and used.

Table of contents

- Introduction

- Types of Machine Learning Algorithms

- Learning Based Machine Learning Algorithms

- Machine Learning Algorithm Based On Functions

- What machine learning algorithms can you use?

- What are the most common and popular machine learning algorithms?

- Naïve Bayes Classifier Algorithm (Supervised Learning – Classification)

- K Means Clustering Algorithm (Unsupervised Learning – Clustering)

- Support Vector Machine Algorithm (Supervised Learning – Classification)

- Linear Regression (Supervised Learning/Regression)

- Logistic Regression (Supervised learning – Classification)

- Artificial Neural Networks (Supervised, Unsupervised, and Reinforcement Learning)

- Decision Trees (Supervised Learning – Classification/Regression)

- Random Forests (Supervised Learning – Classification/Regression)

- Nearest Neighbors (Supervised Learning)

- Conclusion

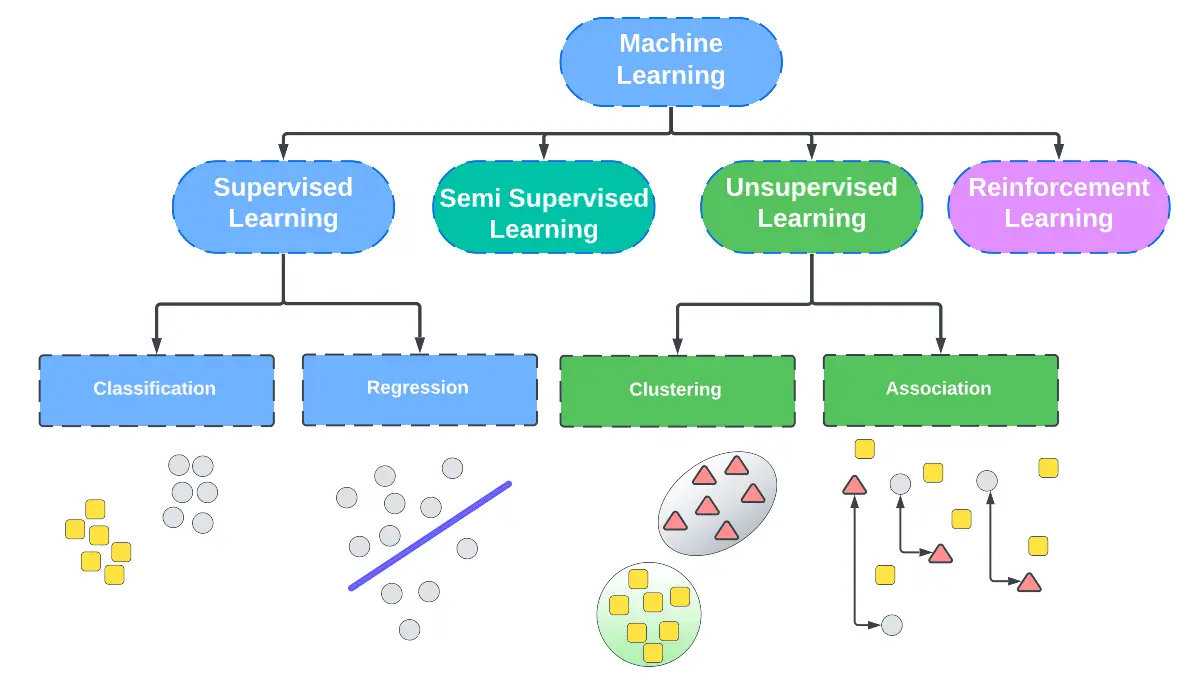

Types of Machine Learning Algorithms

In machine learning, multiple algorithms help us reach descriptive, predictive, and prescriptive results based on the parameters we define. The following sections describe these algorithms.

Learning Based Machine Learning Algorithms

These algorithms learn from the information provided and the expected results. They can learn in several ways:

Supervised Learning

Semi-Supervised Learning

Unsupervised Learning

Reinforcement Learning

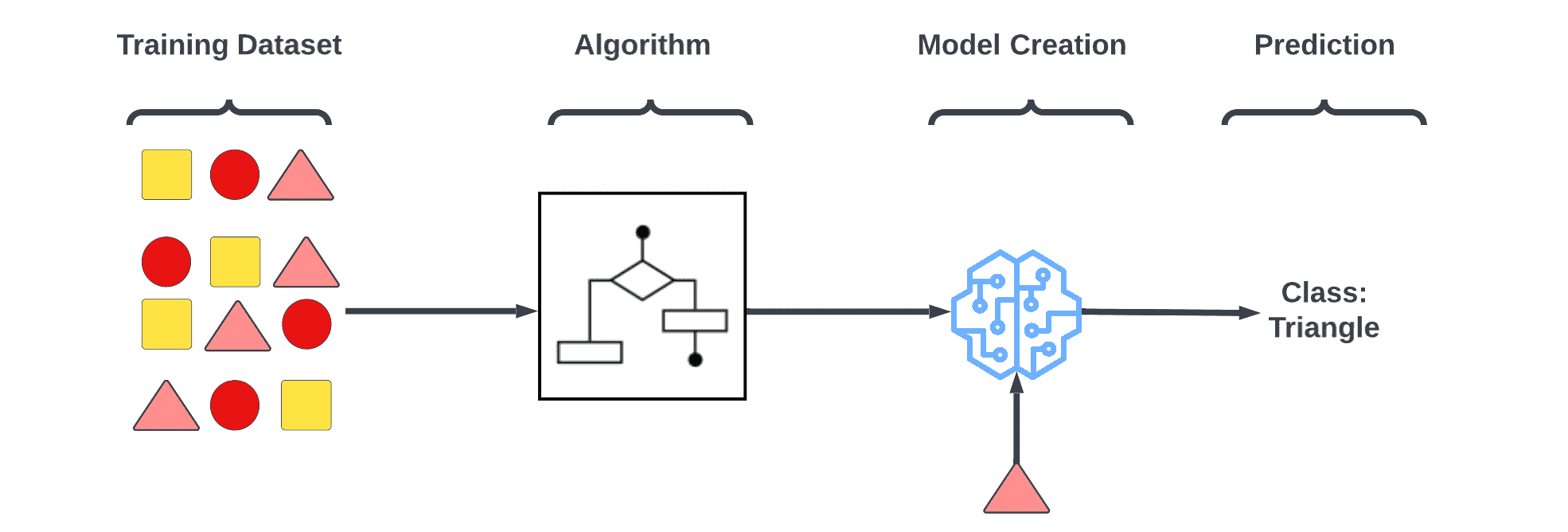

Supervised Learning

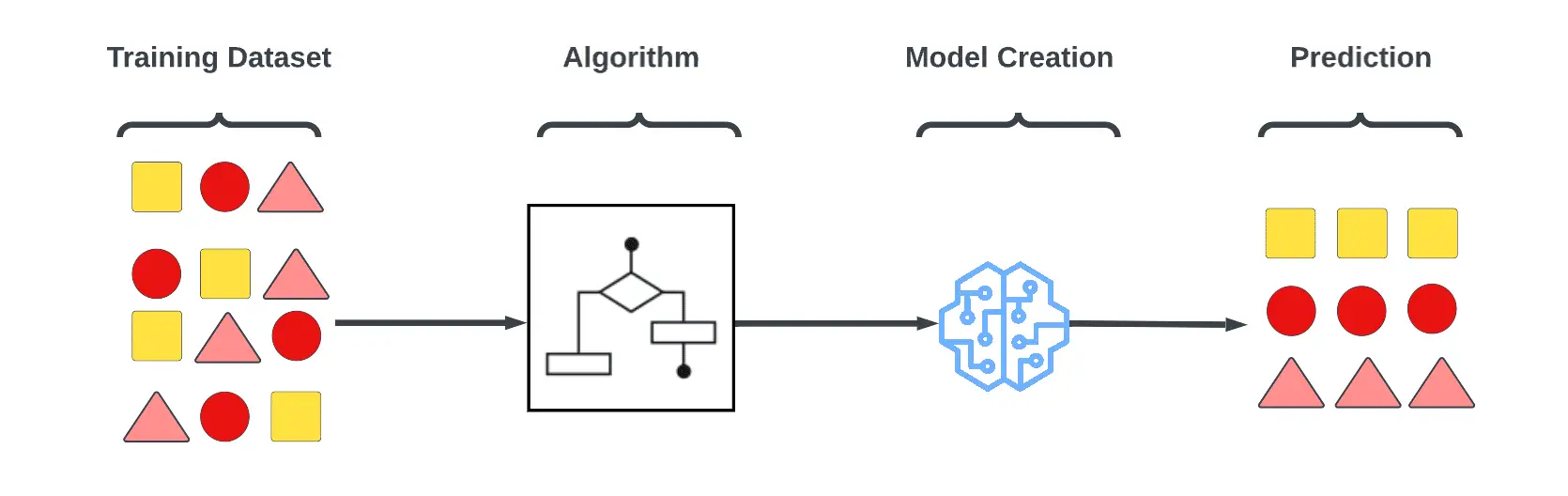

Machines are taught by example in supervised learning. An operator provides the machine learning algorithm with a known dataset that includes inputs and the desired outputs, and the algorithm must determine how to arrive at those outputs from the inputs. While the operator knows the correct answers, the algorithm identifies patterns in the data, learns from observations, and makes predictions. The algorithm makes predictions and is corrected by the operator, and this process continues until it achieves a high level of accuracy or performance.

Under the umbrella of supervised learning fall:

Classification: In classification tasks, the program draws conclusions from observed values and determines which category new observations belong to. For example, when a program filters emails as ‘spam’ or ‘not spam’, it must analyze existing observational data to decide how to label each new email.

Regression: In regression tasks, the program must estimate and understand the relationships between variables. Regression analysis focuses on one dependent variable and a number of other changing variables, which makes it particularly useful for forecasting and prediction.

Forecasting: Forecasting involves analyzing past and present data to make predictions about the future.

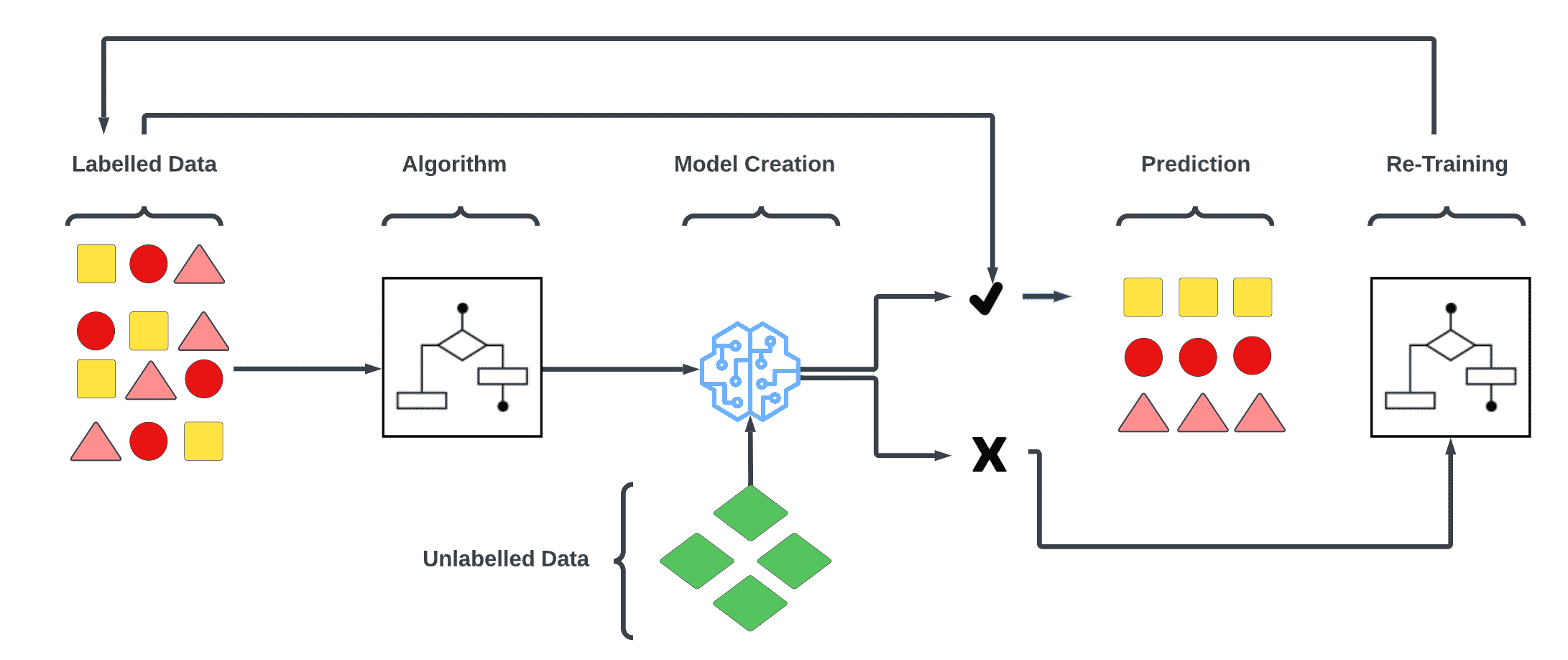

Semi-Supervised Learning

Semi-supervised learning is similar to supervised learning, but it uses both labeled and unlabeled data. Labeled data carries meaningful tags that allow the algorithm to understand it, whereas unlabeled data lacks such tags. By combining the two, machine learning algorithms can learn to label data that has not been labeled.

Semi-supervised learning involves a few steps.

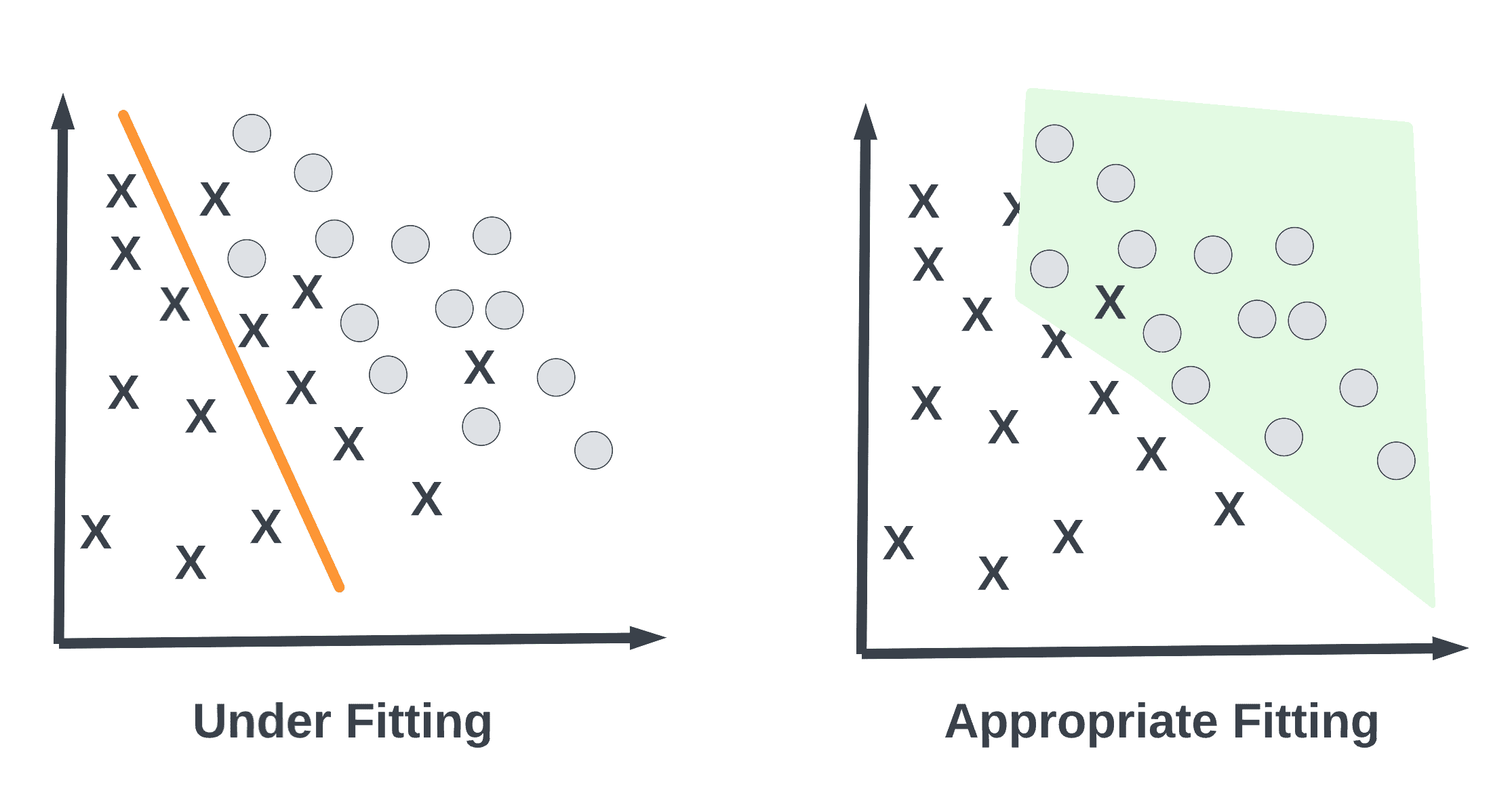

(Classification or Regression Model) – The above figure shows a classification model. It is first trained with labelled data.

After the classifier has been trained on the labelled data, it is given several unlabeled data sets to classify. The model is then retrained on the originally available labelled data together with the newly labelled data to increase its accuracy.

Semi-supervised learning can be applied to both classification and regression problems.
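The train, label, retrain loop described above can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions: the "model" is a toy one-dimensional nearest-centroid classifier, and the function names and data values are hypothetical, not taken from any library.

```python
from statistics import mean

def fit_centroids(points, labels):
    """Train a tiny 1-D model: the mean value (centroid) of each class."""
    classes = sorted(set(labels))
    return {c: mean(p for p, l in zip(points, labels) if l == c) for c in classes}

def predict(centroids, x):
    """Assign x to the class whose centroid is closest."""
    return min(centroids, key=lambda c: abs(x - centroids[c]))

def self_train(labeled_x, labeled_y, unlabeled_x):
    # Step 1: train on the labeled data only.
    model = fit_centroids(labeled_x, labeled_y)
    # Step 2: let the model label the unlabeled data (pseudo-labels).
    pseudo_y = [predict(model, x) for x in unlabeled_x]
    # Step 3: retrain on the labeled data plus the pseudo-labeled data.
    return fit_centroids(labeled_x + unlabeled_x, labeled_y + pseudo_y)

# Hypothetical data: two labeled points and four unlabeled points.
model = self_train([1.0, 9.0], ["low", "high"], [2.0, 3.0, 8.0, 7.0])
```

In practice, only confidently classified points are usually added back to the training set; this sketch adds all of them to keep the example short.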

Unsupervised Learning

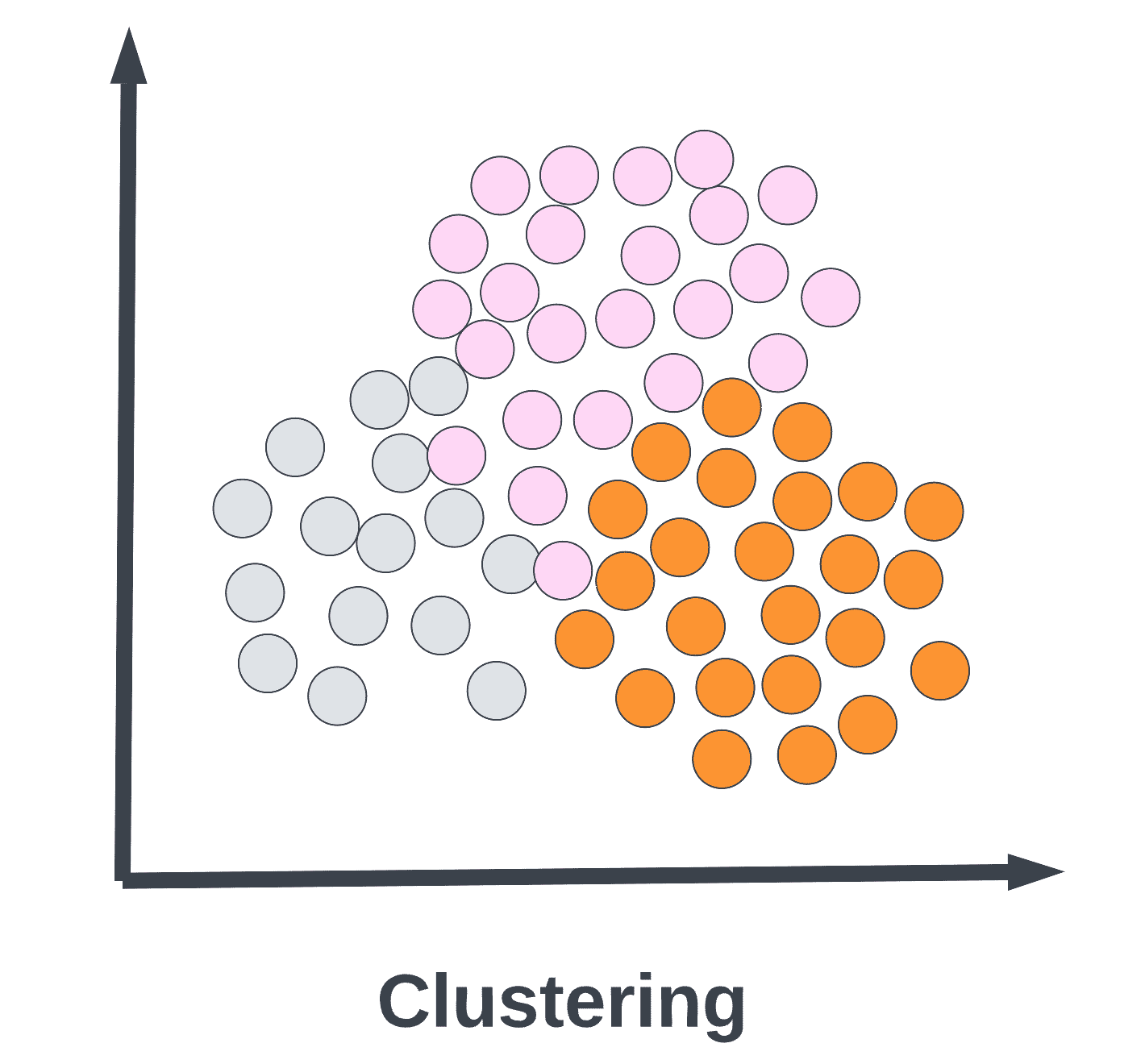

In unsupervised learning, the machine learning algorithm identifies patterns without an answer key or an operator to provide instructions. Instead, the machine analyzes the available data to determine correlations and relationships. It is left up to the algorithm to interpret large data sets and address them accordingly. The algorithm tries to organize the data in a manner that describes its structure, for example by grouping it into clusters or arranging it in a more organized way.

As it assesses more data, its ability to make decisions on that data gradually improves and becomes more refined.

The following fall under the unsupervised learning category:

Clustering: A clustering technique involves grouping similar data (based on defined criteria). It is useful for segmenting data and finding patterns in each group.

Dimension reduction: Dimension reduction reduces the number of variables considered so that the required information is easier to find.

Association rule mining: Discovering relationships between seemingly independent items in databases or other data repositories through association rules.

Examples of unsupervised algorithms include K-Means clustering and the Apriori algorithm.

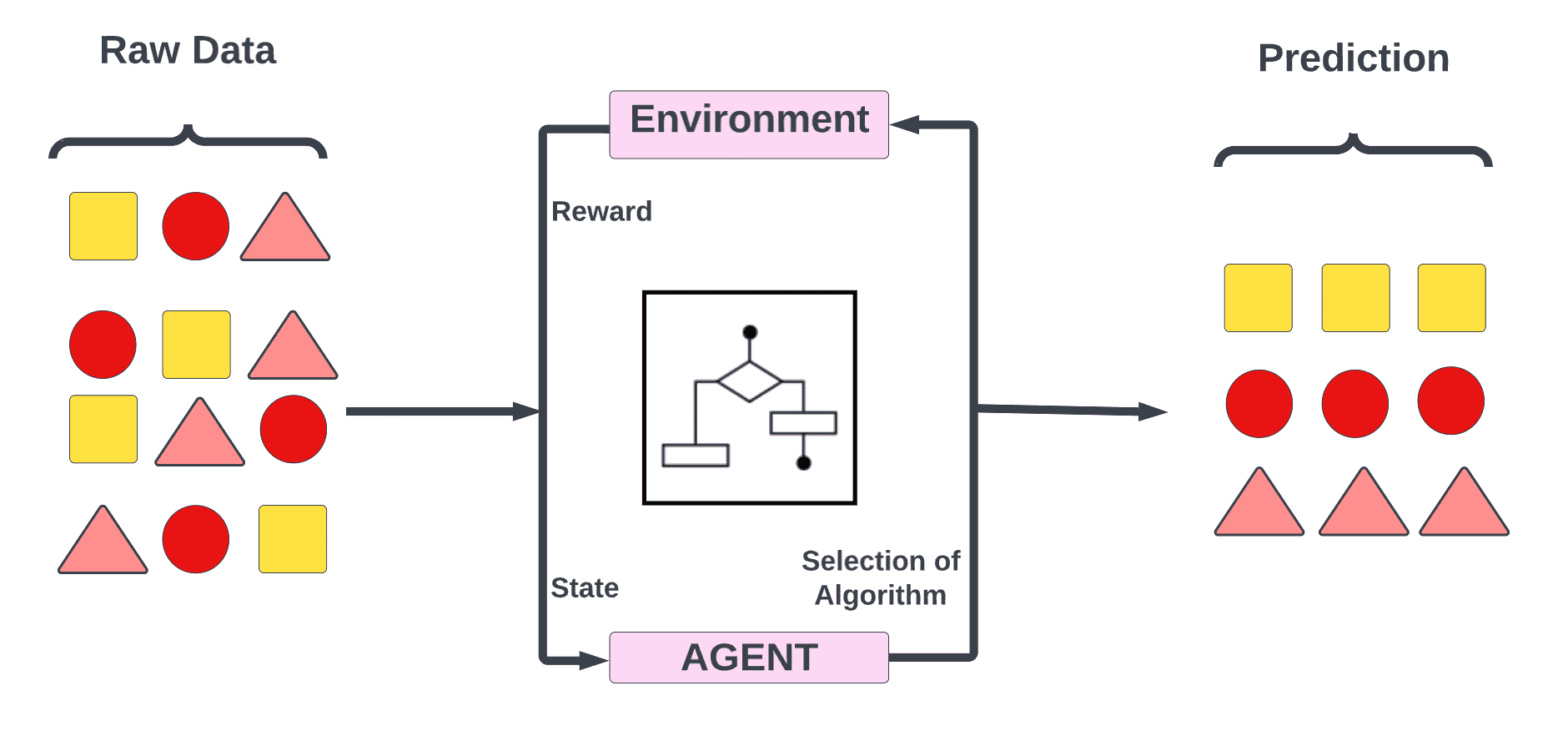

Reinforcement Learning

Reinforcement learning focuses on regimented learning processes in which a machine learning algorithm is given a set of actions, parameters, and end values. Once the rules are defined, the algorithm explores a variety of options and possibilities, monitoring and evaluating each result to determine which one is best. In other words, the machine learns by trial and error: it adapts its approach to the situation based on previous experience in order to achieve the best outcome.
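As a rough illustration of this trial-and-error loop, the sketch below uses an epsilon-greedy strategy on a simple "multi-armed bandit" problem. The strategy, the function name `run_bandit`, and the fixed payouts are assumptions chosen for the example; the article does not prescribe a specific method.

```python
import random

def run_bandit(reward_fn, n_actions, steps=100, epsilon=0.1, seed=0):
    """Learn by trial and error which action gives the highest reward."""
    rng = random.Random(seed)
    estimates = [0.0] * n_actions  # running average reward per action
    counts = [0] * n_actions       # how often each action was tried
    for step in range(steps):
        if step < n_actions:
            action = step                      # try every action once
        elif rng.random() < epsilon:
            action = rng.randrange(n_actions)  # explore a random action
        else:
            # Exploit the action that looks best so far.
            action = max(range(n_actions), key=lambda a: estimates[a])
        reward = reward_fn(action)
        counts[action] += 1
        # Incremental update of the average reward for this action.
        estimates[action] += (reward - estimates[action]) / counts[action]
    best = max(range(n_actions), key=lambda a: estimates[a])
    return best, estimates

payouts = [1.0, 5.0, 2.0]  # hypothetical fixed reward for each action
best_action, estimates = run_bandit(lambda a: payouts[a], n_actions=3)
```

The `epsilon` parameter controls how often the agent explores a random action instead of exploiting the best one found so far.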

Machine Learning Algorithm Based On Functions

Grouping by function lets us consolidate the different machine learning algorithms according to how they operate. For example, there are many different types of regression algorithms, but here we group them together under the single umbrella of Regression Algorithms.

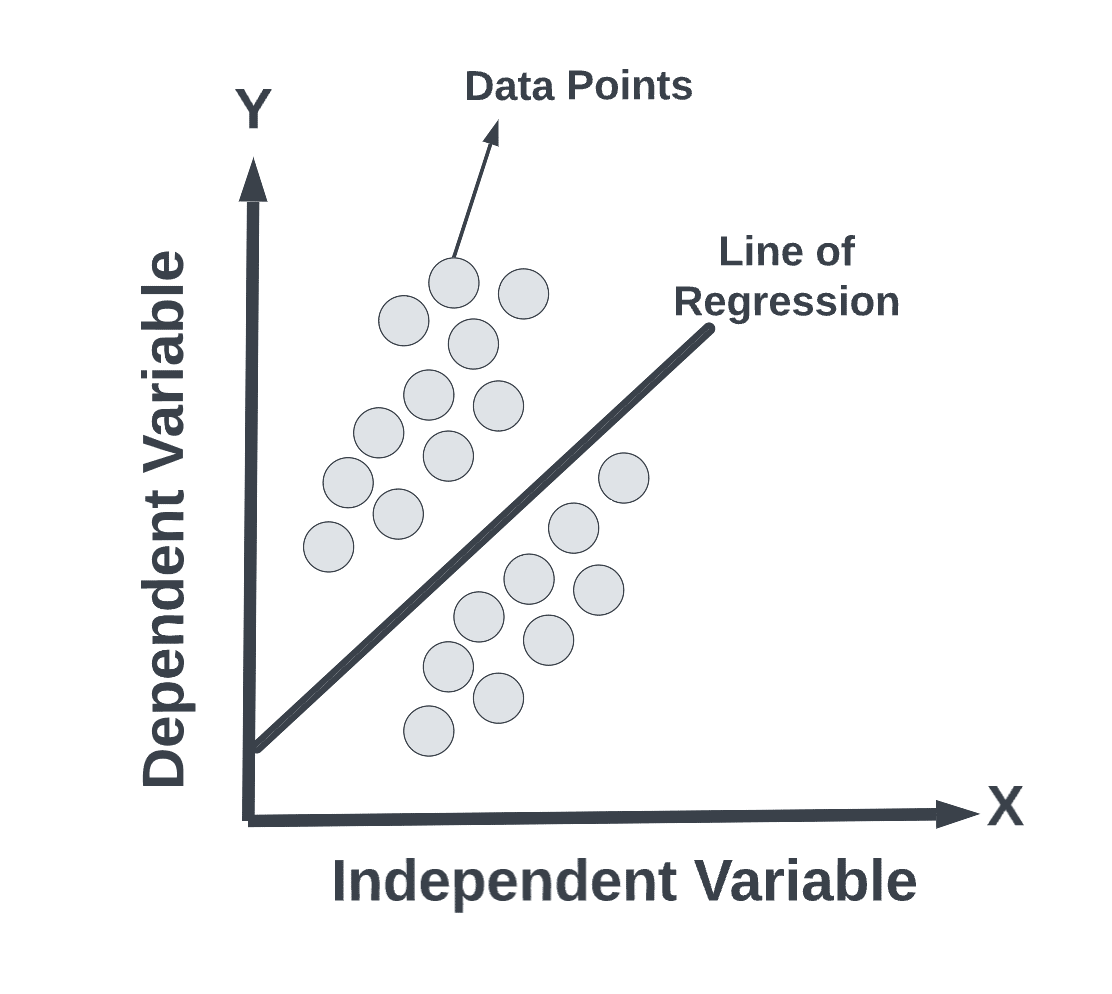

Regression Algorithms

As the name implies, regression analysis estimates the relationship between one or more independent variables (features) and a dependent variable (label). Linear regression is the most widely used method in regression analysis.

Some of the most commonly used Regression Algorithms are:

1. Linear Regression

2. Logistic Regression

3. Ordinary Least Squares Regression (OLS)

4. Stepwise Regression

5. Multivariate Adaptive Regression Splines (MARS)

6. Locally Estimated Scatter Plot Smoothing (LOESS)

7. Polynomial Regression

Instance Based Algorithm

In Machine Learning, generalization is the ability of a model to perform well on new data instances that have not been seen before. The main goal of most Machine Learning models is to make accurate predictions, and the true test is how well they perform on new instances rather than on the training data.

Approaches to generalization can be divided into two broad categories: instance-based learning and model-based learning.

Instance-based learning, also known as memory-based learning, is a machine learning approach in which, instead of building an explicit general model, the algorithm stores the instances seen during training and compares new instances of data with them.

Algorithms that are commonly used in instance-based learning include the following:

1. k-Nearest Neighbor(kNN)

2. Decision Tree

3. Support Vector Machine (SVM)

4. Self-Organizing Map (SOM)

5. Locally Weighted Learning (LWL)

6. Learning Vector Quantization (LVQ)

Regularization Based Algorithm

Regularization is a technique, commonly applied to regression, in which the coefficient estimates are penalized so that they shrink towards zero. This discourages the model from becoming overly complex and flexible, which helps avoid overfitting.

Some commonly used regularization algorithms are:

1. Ridge Regression

2. Lasso (Least Absolute Shrinkage and Selection Operator) Regression

3. ElasticNet Regression

4. Least-Angle Regression (LARS)
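The shrinking effect of the penalty can be seen in a small sketch. It assumes the simplest possible case, Ridge Regression with a single feature and no intercept, where the penalized least-squares slope has the closed form sum(x*y) / (sum(x*x) + lambda). The function name and the data are hypothetical.

```python
def ridge_slope(xs, ys, penalty):
    """Slope that minimizes sum((y - w*x)**2) + penalty * w**2."""
    sum_xy = sum(x * y for x, y in zip(xs, ys))
    sum_xx = sum(x * x for x in xs)
    return sum_xy / (sum_xx + penalty)

# Hypothetical data that lies exactly on the line y = 2x.
xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]

unpenalized = ridge_slope(xs, ys, penalty=0.0)   # ordinary least squares
penalized = ridge_slope(xs, ys, penalty=14.0)    # coefficient shrinks toward zero
```

With no penalty the slope matches the data exactly; as the penalty grows, the slope is pulled toward zero, trading some fit for a simpler model.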

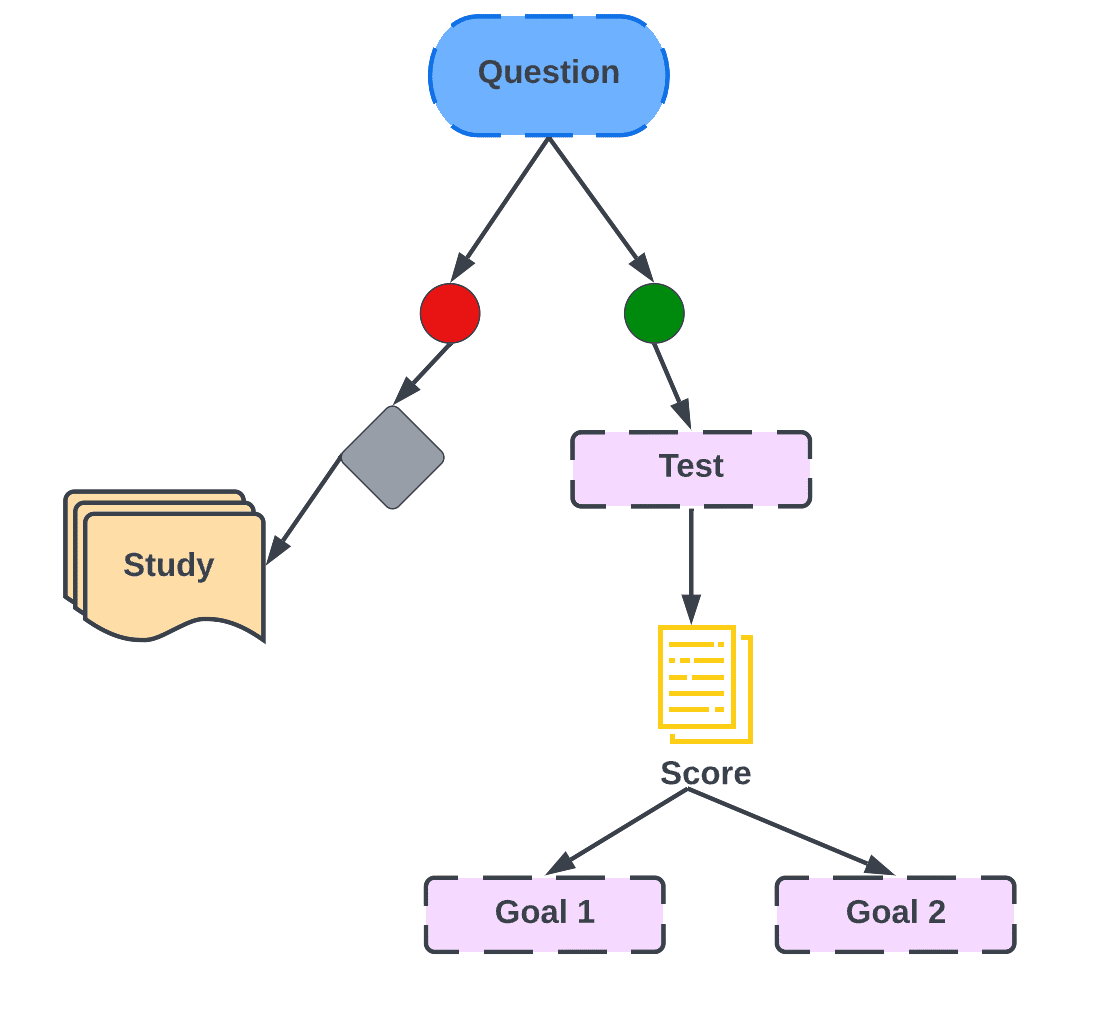

Decision Tree Algorithms

In a decision tree, a model of decisions is constructed based on the attribute values of the data. The decisions keep branching out until a prediction is made for the given record based on its attribute values.

Decision trees are commonly used for classification and regression problems because they are generally fast and accurate.

Some of the commonly used decision tree algorithms are:

1. C4.5 and C5.0

2. M5

3. Classification and Regression Tree (CART)

4. Decision Stump

5. Chi-squared Automatic Interaction Detection (CHAID)

6. Conditional Decision Tree

7. Iterative Dichotomiser 3 (ID3)

Clustering based Algorithms

Clustering is a machine learning technique that groups the data points in a dataset. Given a dataset with a variety of data points, a clustering algorithm assigns each point to a specific group. Clustering falls under the umbrella of unsupervised learning and rests on the idea that data points belonging to the same group have similar properties.

Some commonly used Clustering Algorithms are:

1. K-means clustering

2. Mean-shift clustering

3. Agglomerative Hierarchical clustering

4. K-medians

5. Density-Based Spatial Clustering of Applications with Noise (DBSCAN)

6. Expectation-Maximization (EM) clustering using Gaussian Mixture Models (GMM)

Association Rule Based Algorithms

Association rule learning is a rule-based machine learning method that is useful for discovering relationships between different features in a large dataset.

It basically finds patterns in data which might include:

1. Co-occurring features

2. Correlated features

Some commonly used association rule learning algorithms are:

1. Eclat algorithm

2. Apriori algorithm

{Bread} => [Milk] | [Jam]

{Soda} => [Chips]

As the rules above show, given the sale of items, every time a customer buys bread, they also buy milk or jam. The same happens with soda, where the customer buys chips along with it.
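Rules like these are usually scored with two measures, support (how often the items appear together) and confidence (how often the rule holds when its left-hand side is present). The sketch below computes both for a handful of hypothetical shopping baskets; the basket contents and function names are made up for illustration.

```python
def support(transactions, items):
    """Fraction of transactions that contain every item in `items`."""
    items = set(items)
    return sum(items <= basket for basket in transactions) / len(transactions)

def confidence(transactions, antecedent, consequent):
    """How often the consequent appears in baskets containing the antecedent."""
    both = set(antecedent) | set(consequent)
    return support(transactions, both) / support(transactions, antecedent)

# Hypothetical shopping baskets.
baskets = [
    {"bread", "milk"},
    {"bread", "jam"},
    {"soda", "chips"},
    {"bread", "milk", "jam"},
]

bread_support = support(baskets, {"bread"})
soda_to_chips = confidence(baskets, {"soda"}, {"chips"})
bread_to_milk = confidence(baskets, {"bread"}, {"milk"})
```

Algorithms such as Apriori and Eclat search for all rules whose support and confidence exceed chosen thresholds, rather than checking rules one at a time as this sketch does.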

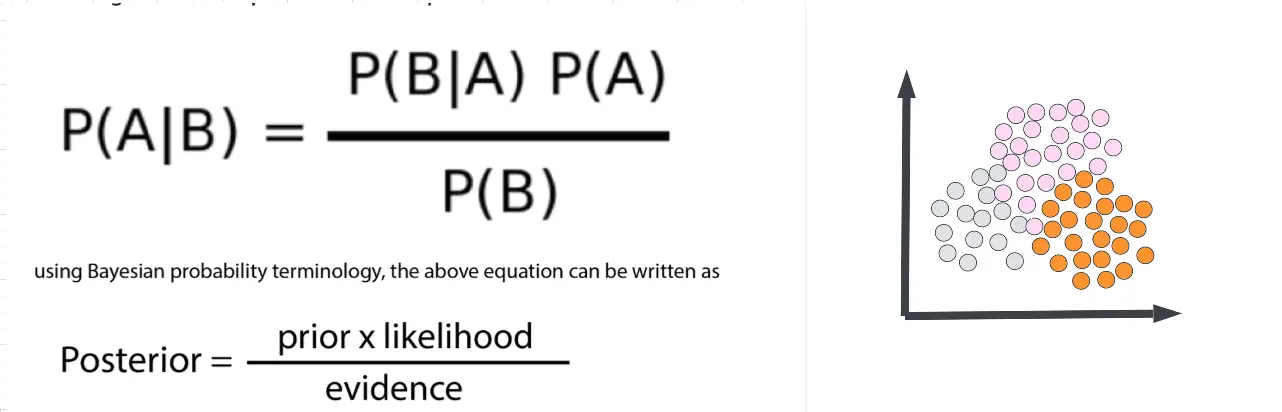

Bayesian Algorithms

In classification and regression, Bayesian algorithms apply Bayes’ theorem to determine the probability of an event based on prior knowledge of conditions related to that event.

Consider, for example, that the chances of developing some kind of health condition increase with age.

Using Bayes’ theorem, we can assess a person’s health condition more accurately by conditioning it on the individual’s age, based on prior knowledge of how health conditions relate to age.
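The age example can be worked through numerically. The sketch below applies Bayes' theorem, P(A | B) = P(B | A) * P(A) / P(B), to probabilities that are purely hypothetical and chosen only to make the arithmetic easy to follow.

```python
def posterior(prior, likelihood, evidence):
    """Bayes' theorem: P(A | B) = P(B | A) * P(A) / P(B)."""
    return likelihood * prior / evidence

# Hypothetical numbers, for illustration only.
p_condition = 0.10               # P(condition) across all ages
p_over60_given_condition = 0.60  # P(over 60 | condition)
p_over60 = 0.30                  # P(over 60)

# P(condition | over 60): the estimate after conditioning on age.
p_condition_given_over60 = posterior(
    p_condition, p_over60_given_condition, p_over60
)
```

With these assumed numbers, knowing that a person is over 60 raises the estimated probability of the condition from 10% to about 20%.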

Some of the commonly used Bayesian Algorithms are:

1. Naïve Bayes

2. Gaussian Naïve Bayes

3. Bayesian Network

4. Bayesian Belief Network

5. Multinomial Naïve Bayes

6. Average One-Dependence Estimators (AODE)

Artificial Neural Networks

Artificial Neural Networks are a branch of Artificial Intelligence that tries to mimic the functions of the human brain. An Artificial Neural Network is a collection of connected units or nodes, referred to as artificial neurons. This structure loosely models the connectivity of neurons in the biological brain.

The most important parts of an artificial neural network are its neurons, which are interconnected just like the cells of the human brain.

Components of Artificial Neural Networks

An ANN has three categories of neurons –

1. Input neuron

2. Hidden neuron

3. Output neuron

The other component of an ANN is the activation function, which generates a neuron’s output from its inputs, for example the output passed from a hidden neuron to an output neuron. The output generated through the activation function is passed on to the subsequent neurons, where it becomes their input.
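To make the flow from input neurons through hidden neurons to an output neuron concrete, here is a minimal forward pass. It assumes the sigmoid as the activation function, which is one common choice among several, and the weights and biases are hypothetical values rather than trained ones.

```python
import math

def sigmoid(z):
    """A common activation function that squashes any value into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, bias):
    """Weighted sum of the inputs, passed through the activation function."""
    return sigmoid(sum(x * w for x, w in zip(inputs, weights)) + bias)

def forward(inputs, hidden_layer, output_neuron):
    """Input neurons -> hidden neurons -> a single output neuron."""
    # Each hidden neuron's output becomes an input to the output neuron.
    hidden = [neuron(inputs, weights, bias) for weights, bias in hidden_layer]
    out_weights, out_bias = output_neuron
    return neuron(hidden, out_weights, out_bias)

# Hypothetical weights and biases: two inputs, two hidden neurons, one output.
hidden_layer = [([0.5, -0.2], 0.1), ([-0.3, 0.8], 0.0)]
output_neuron = ([1.0, -1.0], 0.2)
prediction = forward([1.0, 2.0], hidden_layer, output_neuron)
```

Training algorithms such as back-propagation adjust the weights and biases; this sketch only shows how a prediction is computed once they are fixed.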

Some commonly used Artificial Neural Network Algorithms are:

1. Feed-Forward Neural Network

2. Radial Basis Function Network (RBFN)

3. Kohonen self-organizing neural network

4. Perceptron

5. Multi-Layer Perceptron

6. Back-Propagation

7. Stochastic Gradient Descent

8. Modular Neural Networks (MNN)

9. Hopfield Network

Deep Learning Algorithms

Deep Learning is a branch of Artificial Neural Networks (ANNs) that uses large, complex networks. These networks are computationally more powerful and can solve real-world problems, including real-time tasks, that smaller networks cannot solve efficiently.

Some of the commonly used Deep Learning Algorithms are:

1. Convolutional Neural Networks (CNN)

2. Recurrent Neural Networks (RNN)

3. Long Short-Term Memory Network (LSTM)

4. Generative Adversarial Networks (GANs)

5. Deep Belief Networks (DBNs)

6. Autoencoders

7. Restricted Boltzmann Machines (RBMs)

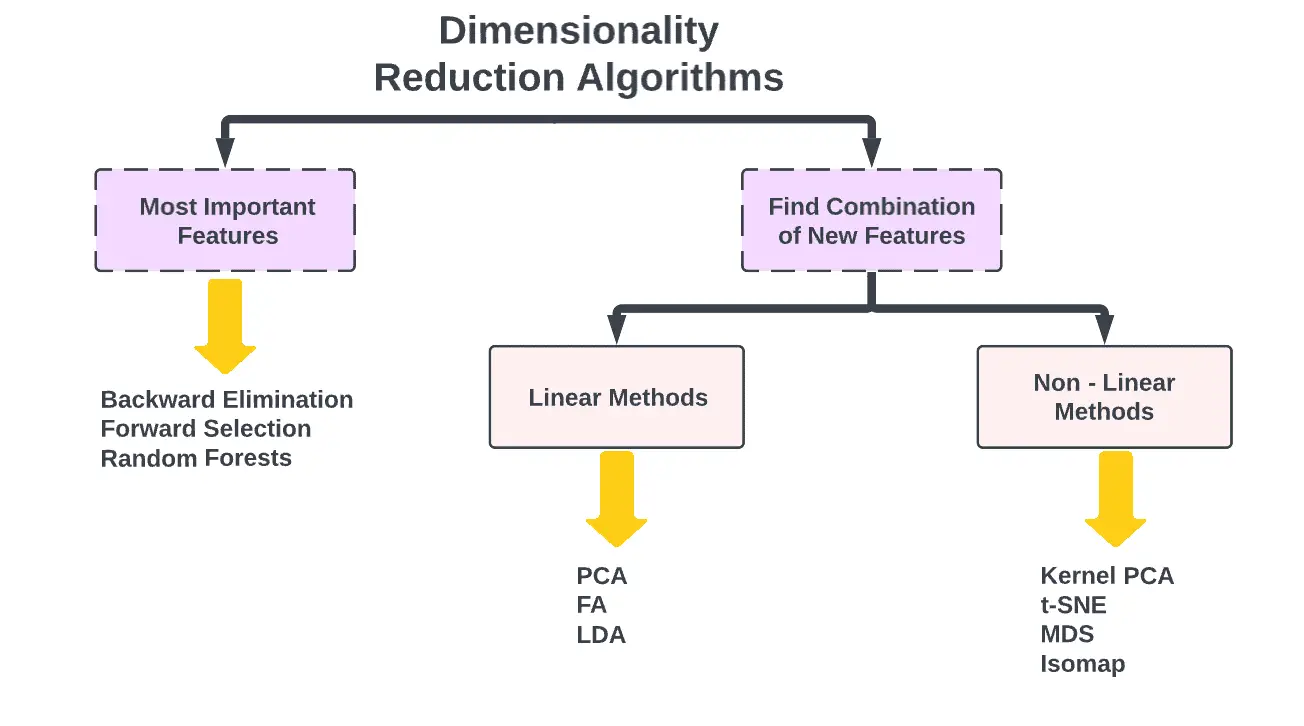

Dimensionality Reduction Algorithms

Dimensionality reduction transforms a high-dimensional data set into a low-dimensional one in a way that retains the meaningful properties of the original data.

In essence, Dimensionality Reduction algorithms exploit the structure of the data in an unsupervised manner in order to summarize or describe it using fewer features than are present in the original data.
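As a small illustration, the sketch below reduces two-dimensional points to one dimension by projecting them onto the direction of greatest variance, which is the idea behind principal component analysis (PCA). It is restricted to the 2-D case so that the principal direction can be computed with a closed-form angle; the function name and data are hypothetical.

```python
import math

def pca_2d_to_1d(points):
    """Project 2-D points onto their direction of greatest variance."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    centered = [(x - mean_x, y - mean_y) for x, y in points]
    # Entries of the 2x2 covariance matrix.
    var_x = sum(cx * cx for cx, _ in centered) / n
    var_y = sum(cy * cy for _, cy in centered) / n
    cov_xy = sum(cx * cy for cx, cy in centered) / n
    # Angle of the first principal axis of a 2x2 covariance matrix.
    angle = 0.5 * math.atan2(2 * cov_xy, var_x - var_y)
    direction = (math.cos(angle), math.sin(angle))
    # Each point is now described by one number instead of two.
    return [cx * direction[0] + cy * direction[1] for cx, cy in centered]

# Hypothetical points lying on the line y = x.
projected = pca_2d_to_1d([(1.0, 1.0), (2.0, 2.0), (3.0, 3.0)])
```

Because these points lie on a straight line, a single coordinate along that line describes them without losing any information.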

Some of the commonly used Dimensionality Reduction Algorithms are:

1. Principal component analysis (PCA)

2. Non-negative matrix factorization (NMF)

3. Kernel PCA

4. Linear Discriminant Analysis (LDA)

5. Generalized Discriminant Analysis (GDA)

6. Autoencoder

7. t-SNE (T-distributed Stochastic Neighbor Embedding)

8. UMAP (Uniform Manifold Approximation and Projection)

9. Principal component Regression (PCR)

10. Partial Least Squares Regression (PLSR)

11. Sammon Mapping

12. Multidimensional Scaling (MDS)

13. Projection Pursuit

14. Mixture Discriminant Analysis (MDA)

15. Quadratic Discriminant Analysis (QDA)

16. Flexible Discriminant Analysis (FDA)

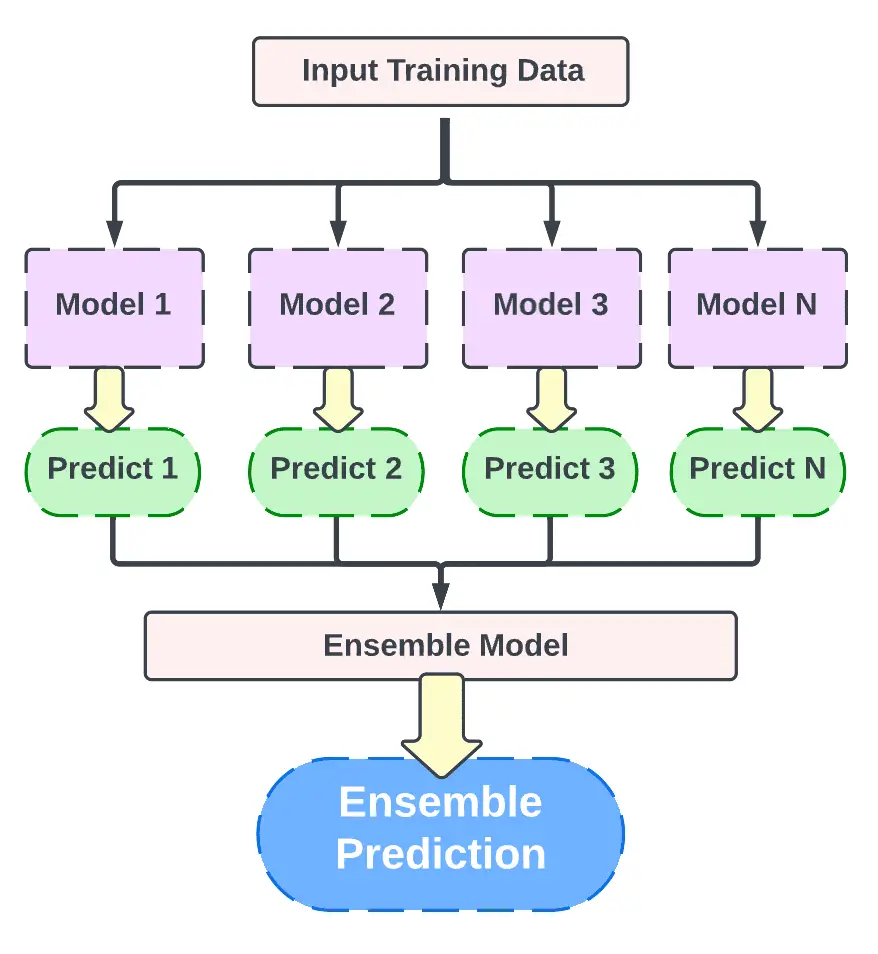

Ensemble Algorithms

Ensemble techniques combine multiple models to achieve better prediction performance than any single model could achieve alone.
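One of the simplest ways to combine models is a majority vote, sketched below. The three "models" are hypothetical threshold rules standing in for trained classifiers, and the function name is made up for this example.

```python
from collections import Counter

def majority_vote(models, x):
    """Return the prediction that most of the models agree on."""
    votes = [model(x) for model in models]
    return Counter(votes).most_common(1)[0][0]

# Three hypothetical weak models, each with a different decision threshold.
models = [
    lambda x: x > 3,
    lambda x: x > 5,
    lambda x: x > 7,
]

vote_for_6 = majority_vote(models, 6)  # two of the three models say True
vote_for_4 = majority_vote(models, 4)  # two of the three models say False
```

Using an odd number of models avoids ties when there are only two possible outcomes.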

Some of the commonly used algorithms are:

1. Boosting

2. Bootstrapped Aggregation (Bagging)

3. AdaBoost

4. Weighted Average (Blending)

5. Stacked Generalization (Stacking)

6. Gradient Boosting Machines (GBM)

7. Gradient Boosted Regression Trees (GBRT)

8. Random Forest

What machine learning algorithms can you use?

Choosing the right machine learning algorithm depends on several factors, including, but not limited to, the amount, quality, and diversity of the data, as well as the answers the business wants to get from that data. Accuracy, training time, parameters, and the number of data points also matter, so the choice comes down to a combination of business needs, specifications, experimentation, and the time available.

What are the most common and popular machine learning algorithms?

Naïve Bayes Classifier Algorithm (Supervised Learning – Classification)

Based on Bayes’ theorem, this classifier assumes that all the features are independent of each other given the class. This assumption allows us to predict a class/category from a given set of features with reasonable confidence.

Although it is a simple classifier, it performs surprisingly well and, despite its simplicity, can sometimes outperform more complex classification methods.

K Means Clustering Algorithm (Unsupervised Learning – Clustering)

K Means Clustering is a type of unsupervised learning used to categorize unlabeled data, that is, data without defined categories or groups. The algorithm finds groups in the data, with the number of groups represented by the variable K. Using the features provided, it then iteratively assigns each data point to one of the K groups.
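The iterative assignment can be sketched for one-dimensional data as follows. This is a simplified illustration: the starting centroids are passed in by hand (real implementations usually choose them randomly or with a seeding heuristic), and the function name and data are hypothetical.

```python
def kmeans_1d(points, centroids, iterations=10):
    """Alternate between assigning points and updating the K centroids."""
    for _ in range(iterations):
        # Assignment step: put each point in the group of its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda i: abs(p - centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its group.
        # An empty group keeps its previous centroid.
        centroids = [
            sum(group) / len(group) if group else centroids[i]
            for i, group in enumerate(clusters)
        ]
    return centroids, clusters

# Hypothetical data with two obvious groups, so K = 2.
points = [1.0, 2.0, 3.0, 10.0, 11.0, 12.0]
centroids, clusters = kmeans_1d(points, centroids=[0.0, 5.0])
```

For this data the centroids settle at 2.0 and 11.0 after two iterations, and further iterations leave them unchanged.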

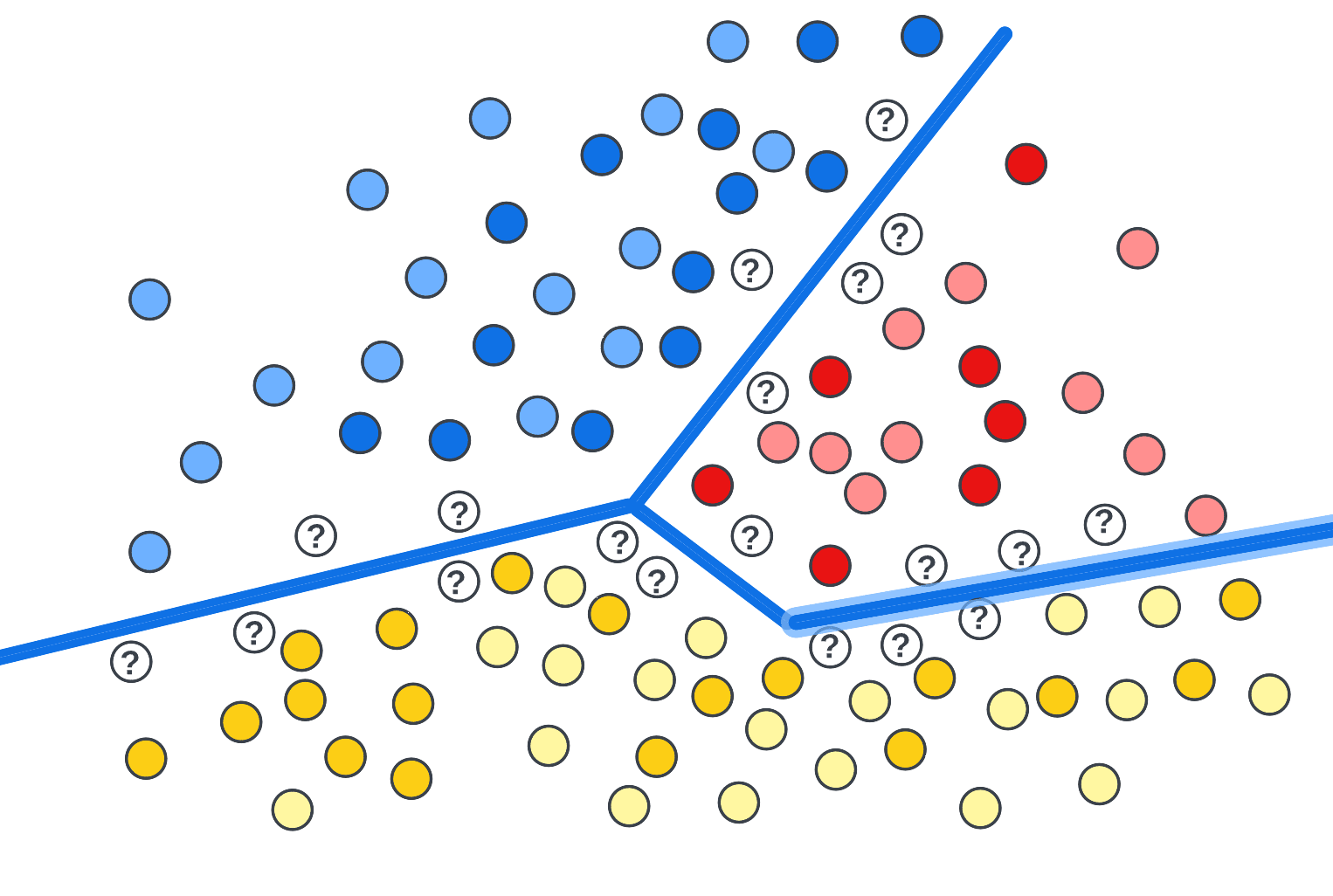

Support Vector Machine Algorithm (Supervised Learning – Classification)

Support Vector Machine algorithms are supervised learning models that analyze data for classification and regression. They essentially filter data into categories: given a set of training examples, each labeled as belonging to one of two categories, the algorithm builds a model that assigns new values to one category or the other.

Linear Regression (Supervised Learning/Regression)

The simplest type of regression is linear regression. It allows us to understand the relationship between two continuous variables by fitting a straight line to the data.
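For two variables, the best-fitting line can be computed directly with the ordinary least squares formulas. The sketch below is a minimal version with hypothetical data; the function name `fit_line` is made up for this example.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope: how x and y vary together, divided by how much x varies.
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Hypothetical data that follows y = 2x + 1 exactly.
slope, intercept = fit_line([1.0, 2.0, 3.0, 4.0], [3.0, 5.0, 7.0, 9.0])
prediction = slope * 5.0 + intercept  # predicted y for a new x of 5
```

Once the slope and intercept are known, predicting a new value is a single multiplication and addition.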

Logistic Regression (Supervised learning – Classification)

The purpose of a logistic regression model is to estimate the probability that an event will occur based on the previous data that has been collected. The model is used for binary dependent variables, which means there are only two possible outcomes: 0 and 1.
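The probability estimate comes from passing a weighted input through the logistic (sigmoid) function, as the sketch below shows for a single feature. The weight and bias here are hypothetical values; in a real model they would be learned from the collected data.

```python
import math

def predict_probability(x, weight, bias):
    """Estimated probability that the outcome is 1 for input x."""
    return 1.0 / (1.0 + math.exp(-(weight * x + bias)))

def predict_label(x, weight, bias, threshold=0.5):
    """Turn the probability into one of the two outcomes, 0 or 1."""
    return 1 if predict_probability(x, weight, bias) >= threshold else 0

# Hypothetical parameters: the probability crosses 0.5 at x = 5.
weight, bias = 1.0, -5.0

low = predict_label(2.0, weight, bias)   # probability well below 0.5
high = predict_label(8.0, weight, bias)  # probability well above 0.5
```

The 0.5 threshold is a common default, but it can be moved when one kind of error is more costly than the other.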

Artificial Neural Networks (Supervised, Unsupervised, and Reinforcement Learning)

An artificial neural network (ANN) comprises a series of ‘units’ arranged in a series of layers, each of which connects to layers on either side. An ANN is an interconnected system of processing elements that work together to solve specific problems. ANNs are modeled after biological systems, such as the brain.

They are also extremely useful for modeling non-linear relationships in high-dimensional data or for difficult-to-understand relationships between input variables. ANNs learn through examples and experience.

Decision Trees (Supervised Learning – Classification/Regression)

As the name suggests, a decision tree is a type of flowchart structure that uses a branching method to illustrate the possible outcomes of a particular decision. Each node of the tree represents a test conducted on a specific variable, and each branch represents the outcome of the test.
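The flowchart structure can be represented directly as nested nodes, as in the sketch below. The tree itself is hand-written and hypothetical (the features, thresholds, and outcomes are invented); a real decision tree algorithm would learn these tests from data.

```python
def predict(node, sample):
    """Walk down the tree, taking one branch per test, until a leaf is reached."""
    # Internal nodes are dicts; anything else is a leaf holding the outcome.
    while isinstance(node, dict):
        passed = sample[node["feature"]] <= node["threshold"]
        node = node["yes"] if passed else node["no"]
    return node

# Hypothetical tree: each node tests one variable against a threshold.
tree = {
    "feature": "age", "threshold": 30,
    "yes": "low risk",
    "no": {
        "feature": "blood_pressure", "threshold": 130,
        "yes": "low risk",
        "no": "high risk",
    },
}

young = predict(tree, {"age": 25, "blood_pressure": 150})
older = predict(tree, {"age": 50, "blood_pressure": 150})
```

Each prediction follows exactly one path from the root to a leaf, which is part of what makes decision trees fast and easy to interpret.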

Random Forests (Supervised Learning – Classification/Regression)

Random forests, also known as random decision forests, are an ensemble learning method that combines multiple decision trees to produce better results for classification, regression, and other tasks. Each individual tree is a weak classifier, but combined they can produce excellent results. Each tree is a tree-like model of decisions: inputs enter at the top, and as the data travels down the tree it is segmented into progressively smaller sets according to specific variables.

Nearest Neighbors (Supervised Learning)

The nearest neighbors algorithm determines which group a data point belongs to by looking at the data points that surround it and the groups they belong to.
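A minimal version of this idea is the k-nearest neighbors vote sketched below. It assumes Euclidean distance and a simple majority vote among the k closest points; the function name and the sample points are hypothetical.

```python
import math
from collections import Counter

def knn_predict(training_data, query, k=3):
    """Predict the group of `query` by majority vote among its k nearest points.

    `training_data` is a list of (point, group) pairs, where each point is a
    tuple of coordinates.
    """
    nearest = sorted(training_data, key=lambda pair: math.dist(pair[0], query))[:k]
    votes = Counter(group for _, group in nearest)
    return votes.most_common(1)[0][0]

# Hypothetical 2-D points belonging to two groups.
training_data = [
    ((1, 1), "A"), ((1, 2), "A"), ((2, 1), "A"),
    ((8, 8), "B"), ((8, 9), "B"), ((9, 8), "B"),
]

near_a = knn_predict(training_data, (2, 2))
near_b = knn_predict(training_data, (7, 9))
```

The choice of k matters: a small k makes the prediction sensitive to individual points, while a large k smooths over local structure.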

Choosing the right machine learning algorithms is crucial to the success of your business’s analytics, and there are many factors to consider when making that choice.

Conclusion

Supervised learning, as a dominant paradigm in machine learning, plays a critical role in the development of intelligent systems capable of making predictions or decisions based on historical data. Through the use of labeled training data, supervised learning algorithms are adept at discerning patterns and relationships within datasets, which they can later apply to unseen data. Its applications are diverse and vast, ranging from image recognition and natural language processing to financial forecasting and healthcare diagnostics. As technology progresses, it is expected that supervised learning will continue to evolve, becoming more efficient and accurate. However, it is imperative to address challenges such as overfitting, data quality, and ethical considerations to ensure that supervised learning continues to be a reliable and beneficial tool in the broader spectrum of artificial intelligence.
